Presidential Column

A Dangerous Dichotomy: Basic and Applied Research

Douglas L. Medin

How can I be so confused by a simple distinction like the difference between basic and applied research? I did an initial draft of a column on this topic months ago, and honestly, it was mostly gibberish.

In his 1997 book, Pasteur’s Quadrant, Donald Stokes reviewed a good deal of the history and political significance of different ideas about the relation between basic and applied research. It may be worth examining our own ideas on the topic. Many of us in academia may be walking around with an implicit or explicit “basic is better” attitude. Imagine two assistant professors coming up for tenure and one has plenty of publications in Psychological Science and the other has plenty in Applied Psychological Science (a hypothetical journal). Which of the two has a better chance of getting tenure? Correct me if I’m wrong, but it seems to me that — hands down — it is the former. My academic appointment is both in psychology and in education, and at least some of my psychology colleagues look down on educational research as (merely or only) applied and justify their attitude on grounds that it is largely atheoretical and not very interesting (and on this point they simply are wrong).

But imagine that psychological science arose in a developing country that was continually facing crucial issues in health, education, and welfare, and universities were dedicated to addressing national needs. Now perhaps the assistant professor who published in Applied Psychological Science would get the nod.

In Pasteur’s Quadrant, Stokes argues for a three-way distinction between pure basic research, pure applied research, and use-inspired basic research (for which the prototype is Louis Pasteur). I do like the term use-inspired because it suggests quite literally that considerations of use can stimulate foundational research. But I’m pretty dubious about “pure” being attached to either category for the reasons that follow.

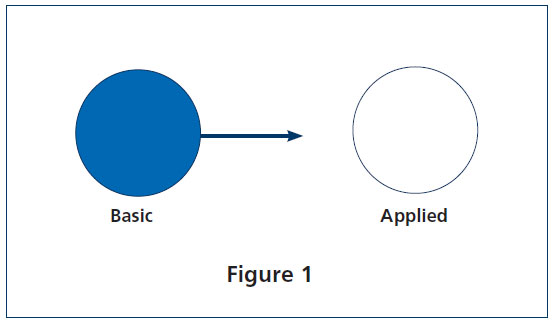

My psychology colleagues might point out that the categories basic and applied are incomplete because, by themselves, they do not capture the causal history between basic and applied research. The short version goes like this: We psychologists ask basic questions about how the mind works and achieve fundamental insights into the nature of cognitive and social processes such as judgment, perception, memory and the like. These insights have implications and applications as wide ranging as the design of cell phones, determining the optimal size of juries, stopping smoking, or mounting an effective political campaign. The path is from theory to application. People in applied settings have to do something, but the standard of evidence-based practice and knowing why something works has to wait for the underpinnings provided by basic research (see Figure 1).

Of course, there are numerous steps between the initial basic research and the eventual practical applications. These steps often involve messy details and many decisions about factors that probably don’t matter, but maybe they do. One can get the sense that clean experimental design is being gradually compromised by these minor details. And it doesn’t help that the theory we are working with may have nothing to say about these decisions. Someone should do this work but, from the perspective of those of us doing basic research, maybe it should be someone else (other than us).

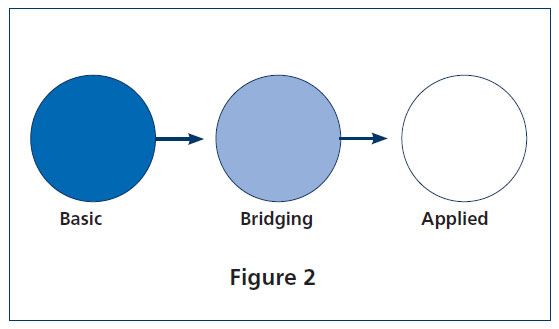

At one point in my work history this stereotype corresponded pretty well with my own attitudes. My opinion was that there was such a gulf between theory and application that we needed not two, but three subtypes of research: basic, applied, and an interface that occupies the middle ground between the two (Figure 2). Of course, if you prefer a more analytic approach to seat-of-the-pants intuitions, you probably can’t do better than APS Fellow and Treasurer Roberta Klatzky’s thoughtful 2009 paper on application and “giving psychology away” (borrowing from Miller, 1969).

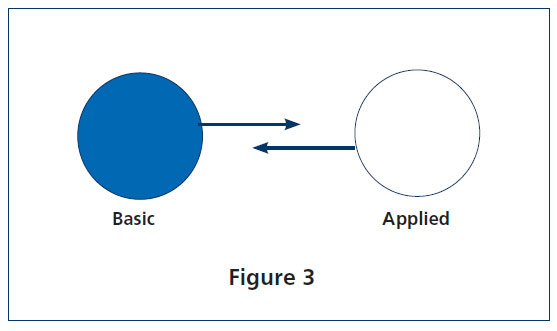

It is testimony to my selective (in)attention abilities that I was also well aware of counter-examples to Figure 2 that go the other way. Consider, for example, signal detection theory, which is arguably one of our field’s more significant accomplishments. It grew out of World War II efforts to interpret radar images and deal with communication over “noisy” channels. Psychological scientists were involved fairly early on, and the Tanner, Green, and Swets (1954) paper is a classic. The central issue of separating sensitivity to information from response bias continues to undergo theoretical development. Signal detection theory also enjoys ever-expanding application. In short, if we are talking about causal histories, we need to include the path shown that goes from applied to basic research (Figure 3). My education colleagues would find this too obvious to mention. But it is a reminder to my psychology pals that when they ignore the application side of things, they also may be ignoring a rich source of theoretical ideas and challenges. So how about we agree to drop the pejorative connotations of the term applied research?
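For readers who want to see what "separating sensitivity from response bias" looks like in practice, here is a minimal sketch. It assumes the textbook equal-variance Gaussian model, which is one common formulation and not necessarily the one used in the 1954 paper; the function name `sdt_measures` and the counts in the example are hypothetical.

```python
from statistics import NormalDist


def sdt_measures(hits, misses, false_alarms, correct_rejections):
    """Return (d_prime, criterion) under an assumed equal-variance Gaussian model.

    d_prime indexes sensitivity; criterion indexes response bias
    (0 = unbiased, negative = inclined to say "signal present").
    """
    hit_rate = hits / (hits + misses)
    fa_rate = false_alarms / (false_alarms + correct_rejections)

    # inv_cdf is the standard normal quantile (z) function. It raises an
    # error for rates of exactly 0 or 1, which this sketch does not correct.
    z = NormalDist().inv_cdf
    z_hit, z_fa = z(hit_rate), z(fa_rate)

    d_prime = z_hit - z_fa               # sensitivity
    criterion = -0.5 * (z_hit + z_fa)    # response bias
    return d_prime, criterion


# Hypothetical observer: 84 hits, 16 misses, 16 false alarms, 84 correct rejections.
d_prime, criterion = sdt_measures(84, 16, 16, 84)
print(f"d' = {d_prime:.2f}, c = {criterion:.2f}")
```

The point of the two numbers is that an observer can become more willing to report a signal (a shift in the criterion) without becoming any better at detecting it (no change in d').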

So then, dangerous dichotomies, such as basic versus applied research, lend themselves to stereotyping. They also create borders that may get in the way. For example, if you’re inclined to do psychological research that has high fidelity to real-world circumstances, you might be accused of doing applied research, because applied research, by definition, has to be high fidelity. But fear of fidelity is a very peculiar malady, and our field must strive to overcome it.

These categories can also be used politically in a sort of Three-Card Monte game [1] to hide values. Applied research transparently reflects a set of value judgments. There is a difference between using persuasion theory to encourage teenagers to stay in school versus encouraging them to start smoking. It is nice to be able to fall back on the argument that basic research is value neutral and that there is a pure science in the form of an uncontaminated quest for knowledge.

Nice, but in my opinion, dead wrong. If basic research were value neutral, would we even need ethical review panels? The use of nonhuman animals in research often reflects the judgment that human welfare is more important than animal welfare (we do things to animals we would never do to people). Especially important, again in my opinion, is the role of positive values in basic research. These values are reflected in the questions we choose to ask (or not ask), how we choose to ask them, who we choose to study (or not study), and who conducts the research. Although I labeled these as positive values, they become potential negatives when we fail to ask relevant questions, ask them in ways that favor one group over another, and prize ownership of science over openness. Frequently, the values in play are cultural values, values that may be different in other cultures and contexts.

For some time now, the National Science Foundation has required grant proposals to have a “broader impacts” section. To be specific, currently under discussion at NSF (see www.nsf.gov/nsb/publications/2011/06_mrtf.jsp) is the idea that projects should address important national goals, including among others, increased economic competitiveness of the United States; development of a globally competitive science, technology, engineering, and math (STEM) workforce; increased participation of women, persons with disabilities, and underrepresented minorities in STEM fields; increased partnerships between academia and industry; and increased national security. [2]

Many (but maybe not all) of these may be values that you endorse, and they may influence how you do your basic research. It’s hard to avoid the conclusion that basic research cannot shunt off the messiness of values to applied research. If we can’t continue to pretend that basic research is pure (for that matter, even purity may be a value), it might be a good idea to pay more careful attention to the values that are reflected in what we do and how we do it.

In summary, I’m still a bit confused about basic versus applied research, but the idea that research provides the opportunity to express values I care about strikes me as a good thing. Bottom line: Applied is not “merely” applied, but is full of fascinating research puzzles. Basic is not “pure,” but rather is saturated with values, ideally values that make us proud to be psychological scientists, but in any event values that merit attention.

Footnotes

[1] In this card game, the dealer shows the player a card then places it face down next to two other cards. The dealer mixes the cards around then asks the player to pick one. If the player picks the original card, he or she wins, but the dealer can employ a number of tricks (such as swapping cards) to keep the player from choosing the right card.

[2] The response to this proposal has been sharp, bimodal criticism with some scholars arguing that the standards “water down” previously highlighted goals like fostering diversity and others objecting to these values because they would get in the way of pure, basic research. In response to this feedback, the task force charged with developing these standards is currently rethinking and revising them. Stay tuned.

Comments

A century of outstanding psychological research has been intimately tied to so-called “applied” work, including landmark studies of prejudice, health, judgment, education, therapy, obedience, communication, influence, navigation, testing, well-being, decision-making, and so much more. Many of us in academe are not walking around with a “basic is better” attitude, because it is usually an impossible or misleading distinction. The better distinction, I think, is “revealing, novel, insightful, important” versus “ordinary, average, boring.”

Douglas Medin’s recent essay about the “Dangerous Dichotomy” serves well to illuminate the distinctions and relationships between basic and applied research, particularly the values engaged by both endeavors. As a co-founder of one of the first graduate programs to label itself Applied Social Psychology, I’ve had occasions to comment on ways of comparing basic and applied projects, and others have proposed similar comparisons, along several dimensions including: a) Purpose, to advance general knowledge about fundamental phenomena vs. to provide information that’s relevant to a practical problem; b) Validity concerns, internal vs. external and ecological; c) Setting, artificial lab vs. natural field; d) Design, experimental vs. non-experimental; e) Methods of data collection, stationary equipment vs. questionnaires; f) Participants, non-humans or college students vs. specific types of people with a stake in the outcome; g) Initiators, researchers vs. researchers plus other stakeholders; h) Who cares, (same as previous); and i) Presentation media and audiences, psychological publications vs. project reports to clientele. This list could be expanded to include other considerations such as typical sources of funding and degree of multidisciplinarity, but I believe that none of these is definitive, that any given study has some location with respect to these considerations, and that those locations can refer to basic, applied, or both.

To me the most substantial comparisons involve the origin of the topic and the role of theory in a project. Basic research originates from within psychology, and the aim is to test one or more theories for verification, or to explore a topic and form a theory about it. Really applied research originates from the world outside of psychology, and the role of theory is to supply ideas about the constructs and relations among them so the researcher can conceptualize the practical problem. Basic research is mainly about formulating and testing theories; applied research uses these theories (and maybe other ideas) to develop a conceptual framework to guide a project. Of course, these comparisons, like those above, are not absolute, which means that basic and applied research need not be seen as dichotomous. Instead, as suggested by Medin’s Figure 3, and Kurt Lewin’s practical theorizing, the relationship between basic and applied can be co-equal and reciprocally informative.

John David Edwards, Emeritus Professor, Loyola University Chicago
