Using Neuroscience to Challenge Our Eyes and Ears

The split-second distinctions made possible by neuroscience challenge common understandings of how we see and hear

Congenital amusia, a neurodevelopmental disorder that limits an individual’s ability to process subtle distinctions in pitch, is often referred to simply as tone deafness.

“A person with amusia does not hear the difference between a musical melody and a slightly mistuned version of it very well,” explained neurolinguist Caicai Zhang of the Hong Kong Polytechnic University. They may also struggle to recognize a familiar tune or, in the case of people with a subtype of amusia known as beat deafness, have difficulty synchronizing with a rhythm.

Referring to these deficits as “deafness” might suggest to a layperson that individuals with amusia are unable to hear certain tones, but the millisecond-to-millisecond analysis made possible by neuroscience tells a different story.

These individuals’ ability to receive auditory input appears to be largely intact on a mechanical level, Zhang explained. Instead, her work supports the notion that amusia may arise from the brain’s reduced ability to direct conscious attention to distinguishing between similar tones.

That is, amusic brains may be able to receive all of the same pitch information that more musically inclined brains do; they just may not be set up to process it as deeply. In fact, some studies have even found that people with amusia can imitate pitch patterns that they cannot consciously perceive.

“[Amusic brains] can actually track the pitch changes as well as typical listeners who are not paying attention to the pitch stimuli, but these brains become dysfunctional when they have to pay attention to and actively detect the pitch changes,” Zhang said of the distinction.

Noting notes

The difference comes down to whether amusia influences conscious or unconscious processes in the brain, Zhang and colleagues Gang Peng, Jing Shao, and William S.-Y. Wang (Hong Kong Polytechnic University and Shenzhen Institute of Advanced Technology, China) wrote in a 2017 Neuropsychologia article. This is the kind of split-second distinction that neuroscience is uniquely equipped to address.

“As with any other neurodevelopmental disorder, to fully understand amusia we need to look beyond the behavioral symptoms and probe into the neural substrates,” Zhang said. “Given the excellent spatial and temporal resolution of fMRI [functional magnetic resonance imaging] and EEG [electroencephalography], they are especially suited for understanding ‘where’ and ‘when’ the impairment occurs in the brain of people with amusia.”

Previous research with speakers of nontonal languages, such as English, has found amusia’s tonal deficits to be reflected in reduced activity in these individuals’ right inferior frontal gyrus, an area often associated with music processing. But comparatively little work has investigated how amusia manifests in the brains of tonal-language speakers, wrote Zhang and colleagues.

Pitch processing is particularly essential for individuals who speak tonal languages such as Cantonese and Mandarin, Zhang and colleagues explained, in which words are distinguished not only by their pronunciation but by their pitch (high, mid, or low; rising, flat, or falling). The Cantonese word for “doctor,” for example, is spoken with a high tone; pronounced the same way and spoken with a low tone, the word becomes “second.” Amusia may present differently in the brain for speakers of tonal languages than for speakers of nontonal languages.

Zhang and colleagues investigated pitch processing in their 2017 fMRI study of 22 Cantonese speakers, half of whom had amusia. While the researchers observed them under fMRI, the participants heard a set of three Cantonese words distinguished only by their contrasting tones (“doctor,” “meaning,” and “second”) and a set of three piano notes that matched the pitch of those words.

In response to these linguistic and musical stimuli, participants with typical pitch-processing abilities exhibited activity in the right superior temporal gyrus (an area associated with language processing) and cerebellum (an area associated with habituation to stimuli). Individuals with amusia, on the other hand, did not exhibit such activity. Additionally, the first group of participants exhibited reduced activity in the right middle frontal gyrus (an area associated with reorienting attention) and precuneus (an area associated with episodic memory and self-consciousness) when the stimuli were repeated. By comparison, amusic individuals’ activation remained unusually strong, as if they were hearing the words and tones for the first time.

“This seems to suggest a deficit in attending to repeated pitch stimuli, or encoding repeated pitch stimuli into working memory,” Zhang and colleagues wrote.

Amusia and attention

In another study, published in NeuroImage: Clinical in 2019, Zhang and Shao further investigated how amusia influences attention and memory by studying how Cantonese speakers with and without the condition account for variation in different individuals’ speech. This ability, known in the context of speech as talker normalization, allows listeners to use the relative differences between tones to recognize the phonemes, or units of sound, that make up a language despite differences in individual speakers’ vocal ranges. Talker normalization is particularly important for speakers of tonal languages, but the same relative-pitch processing also allows speakers of any language to, for example, recognize a song when it is played in a different key, Zhang and Shao explained.
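The idea of judging tones by their relative rather than absolute pitch can be illustrated with a minimal sketch. This is not the method used in the study; it simply assumes that each talker's pitches can be re-expressed in semitones relative to that talker's own average pitch. The function names and the pitch values in hertz are hypothetical and chosen only for illustration.

```python
import math


def hz_to_semitones(f0_hz, reference_hz):
    """Convert a pitch in Hz to semitones above or below a reference pitch."""
    return 12.0 * math.log2(f0_hz / reference_hz)


def normalize_to_talker(f0_values_hz):
    """Re-express each pitch relative to the talker's own geometric-mean pitch.

    Assumption for illustration: the talker's "vocal range" is summarized
    by the geometric mean of the pitches they produced.
    """
    log_mean = sum(math.log2(f) for f in f0_values_hz) / len(f0_values_hz)
    reference_hz = 2.0 ** log_mean
    return [hz_to_semitones(f, reference_hz) for f in f0_values_hz]


# Hypothetical pitches (Hz) for a low, mid, and high tone from two talkers.
# The second talker speaks exactly one octave higher than the first.
low_voice = [100.0, 125.0, 150.0]
high_voice = [200.0, 250.0, 300.0]

low_normalized = normalize_to_talker(low_voice)
high_normalized = normalize_to_talker(high_voice)
```

In this sketch the two talkers' absolute pitches never overlap, yet their normalized tone patterns match to within floating-point rounding, which is the sense in which a listener can recognize the same tone, or the same song in a different key, across voices.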

In this study, 48 participants, half with amusia, listened to recordings of single individuals and alternating pairs of speakers reading the same three tonally distinct Cantonese words used in the previous study. Participants were tasked with pressing a button each time they detected a tonal change. During the task, the electrical activity in their brains was monitored by EEG for a period of about 800 milliseconds.

As expected, participants with amusia were less accurate at identifying tonal changes. The pattern of their brain activity, or event-related potentials, in response to the linguistic stimuli also differed noticeably from that of typical listeners. Participants in both groups showed significant activity during the first time range, when listeners are thought to engage in auditory processing. From there, participants with amusia were slower to exhibit their next burst of activity, when listeners are thought to begin directing conscious attention toward novel stimuli, and then exhibited reduced activity during time ranges associated with attentional skills and categorization.

“These findings are largely consistent with the hypothesis that amusics are relatively intact in early auditory processing, and are primarily impaired in later, conscious perceptual evaluation or categorization of pitch stimuli,” Zhang and Shao wrote.

Feedback, feedforward

Amusia appears to reduce sensitivity to pitch at least in part by hindering the brain’s ability to store and recall tones it has experienced before. A typically functioning brain uses information about previous sensory experiences to make moment-to-moment predictions about current experiences in order to process inputs more efficiently.

“The brain does more than adapt to repeated inputs,” explained André M. Bastos (Massachusetts Institute of Technology) and colleagues in a 2020 study published in the Proceedings of the National Academy of Sciences. “A wide variety of evidence indicates that it makes mental models of the world that actively generate predictions.”

This process, known as predictive coding, allows the brain to inhibit the processing of expected stimuli while “feeding forward” stimuli that violate those predictions for further processing. This additional processing of unpredicted stimuli, which register as prediction errors, allows the brain to update its mental models. In the 2020 study, Bastos and colleagues examined the neural mechanisms involved in processing predictions by implanting laminar neural recording probes in the brains of two macaque monkeys that had been trained to perform a simple visual matching task.

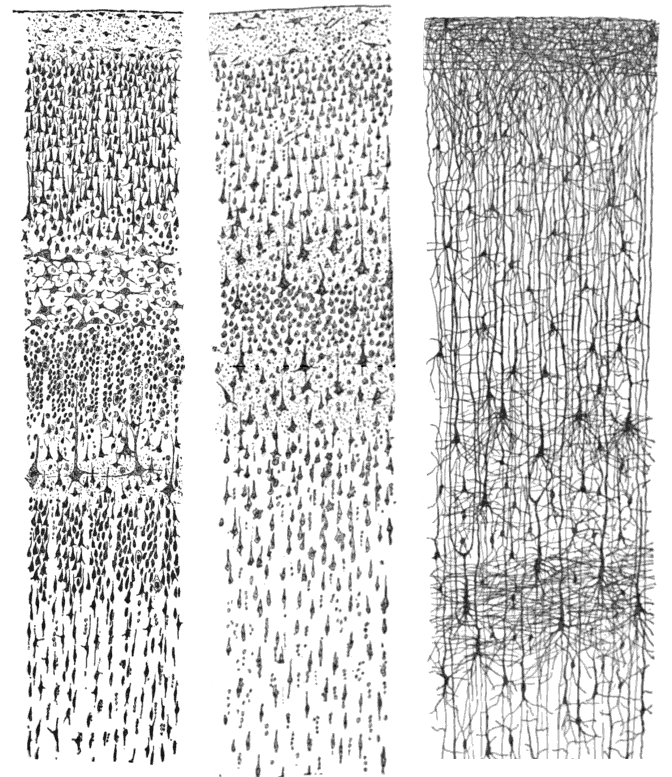

Laminar neural recording allows researchers to monitor electrical activity at different frequencies in the brain by implanting thin metal probes through the cortex. The probes can then detect the flow of electrical activity within the “microcircuit” formed by the cortex’s layers, as well as activity coming into the cortex from sensory receptors and flowing to or from other areas of the brain involved in higher-level processing.

In the case of the visual cortex, Bastos and colleagues explained in a 2015 study published in Neuron, neural recording reveals two opposing streams of activity. The superficial (top) layers of the cortex send “feedforward” sensory information from the eyes in the form of gamma waves (40–90 Hz). The bottom layers, drawing on information from higher visual areas of the brain, send predictions in the form of alpha/beta waves (8–30 Hz) to meet that incoming information.

In their 2020 study, Bastos and colleagues found that when incoming sensory information did not match a prediction, the discrepancy (or “error”) generated more neuronal spiking and high-frequency gamma waves, through which the sensory information was sent for further processing elsewhere in the brain. When stimuli fit the expected pattern (that is, when the brain’s prediction was found to be correct), Bastos and colleagues instead detected more low-frequency alpha/beta waves in the cortex’s bottom layers, indicating a top-down process that inhibited the production of gamma waves and, thus, further processing of the stimuli. In this case, the macaque could be said to be “seeing” its prediction, not what was really in front of it.

“When stimuli are predictable, these rhythmic, layer-specific mechanisms prepare and inhibit columns in the sensory cortex that process the predicted stimulus,” Bastos and colleagues wrote. “In the absence of these pathway-specific prediction signals, sensory samples receive stronger processing.”

This suggests that there are not specialized circuits for computing prediction errors in the brain, the researchers noted. Instead, the same cortical circuitry is used regardless of whether a visual input requires additional processing. When a prediction is correct, the alpha/beta waves are able to inhibit further processing because the prediction is routed through the same circuitry that would otherwise have processed unexpected information. Bastos and colleagues refer to this model as “predictive routing.” The central idea is that the brain optimizes information processing by reducing neural traffic along predictable routes.
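The logic of suppressing predicted inputs and passing along surprising ones can be sketched as a toy model. This is an illustration only, not the researchers’ analysis or a model of real cortical circuitry: the function name, the threshold, the learning rate, and the input values are all hypothetical, and a single running number stands in for the brain’s “mental model.”

```python
def route_inputs(inputs, threshold=0.75, learning_rate=0.5):
    """Label each input as suppressed (predicted) or fed forward (surprising).

    Toy assumptions: the first input seeds the prediction, and the
    prediction is updated only when an input violates it.
    """
    prediction = inputs[0]
    labels = []
    for value in inputs[1:]:
        error = value - prediction  # mismatch between input and prediction
        if abs(error) > threshold:
            # Surprising input: pass it on and use the error to update the model.
            labels.append("feedforward")
            prediction += learning_rate * error
        else:
            # Input matches the prediction closely enough: inhibit further processing.
            labels.append("suppressed")
    return labels


# Hypothetical stream: a stable input, a small fluctuation, then a sudden change.
stream = [5.0, 5.0, 5.2, 9.0, 9.0, 9.0, 9.0]
labels = route_inputs(stream)
```

In this sketch the small fluctuation is suppressed, the sudden change is fed forward until the running prediction has caught up, and later repetitions of the new value are suppressed again. This mirrors, in a very loose way, how traffic is reduced along predictable routes.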

“The brain exploits predictability,” the researchers explained. “It makes cortical processing more efficient.”

The brain is always adjusting the degree to which it relies on prediction and updating its mental model of the world, Bastos said. In a highly familiar environment, predictions are likely sufficient to guide us with only occasional updates from our surroundings. But in more dynamic or new environments, he continued, we need to rely less on predictions and more on bottom-up sensory data; otherwise, we risk mistaking an inaccurate model of the world for reality. An overly rigid and negative internal model of the world may underlie brain disorders such as depression, for example, Bastos explained. Future research may help uncover whether drugs and other therapies can help people shift the balance between their brain’s reliance on predictions and on sensory inputs, as well as the extent to which this process can be consciously controlled.

Published in the print edition of the March/April issue with the headline “Secrets of the Senses.”

References

Bastos, A. M., et al. (2015). Visual areas exert feedforward and feedback influences through distinct frequency channels. Neuron, 85(2), 390-401. https://doi.org/10.1016/j.neuron.2014.12.018

Bastos, A. M., Lundqvist, M., Waite, A. S., Kopell, N. & Miller, E. K. (2020). Layer and rhythm specificity for predictive routing. Proceedings of the National Academy of Sciences, 117(49), 31459-31469. https://doi.org/10.1073/pnas.2014868117

Hutchins, S. & Peretz, I. (2012). Amusics can imitate what they cannot discriminate. Brain and Language, 123(3), 234-239. https://doi.org/10.1016/j.bandl.2012.09.011

Dougherty, K. (2018, August 11). Laminar neural recordings with electrophysiology [Video]. YouTube. https://www.youtube.com/watch?v=TyP9MQXXH1Q

Zhang, C., Peng, G., Shao, J. & Wang, W. S. Y. (2017). Neural bases of congenital amusia in tonal language speakers. Neuropsychologia, 97, 18-28. https://doi.org/10.1016/j.neuropsychologia.2017.01.033

Zhang, C. & Shao, J. (2019). Talker normalization in typical Cantonese-speaking listeners and congenital amusics: Evidence from event-related potentials. NeuroImage: Clinical. https://doi.org/10.1016/j.nicl.2019.101814
