PsyCorona: A World of Reactions to COVID-19

How an online data visualization tool reports data from an international psychological survey

The COVID-19 pandemic and corresponding lockdowns have prompted one of the greatest mass disruptions to civil life in modern history. The potential psychological consequences of facing a virus without a vaccine are vast; some may be immediately evident, while others may manifest over time. The psychological impact of the pandemic may also vary across culture and context. Although psychological research and theories could help to explain responses to COVID-19 (Bélanger, 2020; Van Bavel et al., 2020), the last global virus event of this magnitude—the 1918 flu pandemic—occurred when empirical psychology was still at an early stage. As COVID-19 began its spread, it became clear that psychological science might benefit from a globally oriented study that could offer some insight into which reactions were universal and which were unique to certain regions and cultures.

In March 2020, a collaboration of over 100 researchers pooled available resources to launch a rapid international survey with the goal of creating a historical record of certain psychological and behavioral responses to the pandemic. The ongoing study incorporates cross-cultural, longitudinal, and integrative data science methods to maximize the scientific value and re-use potential of the data. In addition to assessing regional demographics, psychological data, and metadata, the survey assesses key behaviors such as frequency of leaving the home and tendencies toward physical distancing. Further information about this research is provided on the project website and in a PsyCorona preview article in the September 2020 Observer.

Approximately 60,000 respondents completed the initial survey, which was available in 30 languages. Given early indications that age and gender were likely vulnerability factors (Centers for Disease Control and Prevention, 2020; Wenham et al., 2020), the sample included 20 national subsamples representative of population age/gender distributions. After completing the survey, respondents could sign up to be contacted for follow-up surveys that would continue through the initial lockdowns and into an anticipated second wave of the virus in the fall or winter. The longitudinal research will continue through 2020.

The User Experience

To develop this interactive Web application, the members of the PsyCorona Collaboration focused on a set of principles and characteristics to ensure a good user experience.

• Trust and transparency: The sections “The Sample” and “Data” aim to offer transparency about our sample as well as our data protection, preparation, and sharing. We provide full question wording where possible and provide explanations in a manner that is accessible to researchers, practitioners, and participants alike.

• Clarity and robustness: To ensure the validity of the conclusions drawn from the data and to safeguard against sample artifacts, users can conduct some basic checks. One bias might be introduced by the sampling method and survey dissemination. To safeguard against sampling biases, users can toggle between viewing the full sample or only the age/gender-representative samples. Another bias might arise from differences between countries in how people respond to survey questions (e.g., Gelfand et al., 2002). One conservative method to adjust for potential cultural response biases is to assess within-person standardized scores. The “Transformation” button converts the data to such scores.

• User autonomy: We aim to facilitate interactive exploration. We created a curated selection of variables and anonymized the data set, but we have not curated the output or how it is interpreted. Whereas traditional scientific publications tend to focus on specific patterns in the data, users are free to examine their patterns of interest and interpret the output independent of the views of the developers. Users may pursue a targeted question-answer approach (i.e., the three-step approach of evaluation, examination/exploration, and validation) or engage in free exploration and trial-and-error approaches.

The PsyCorona Data Visualization Tool

Alongside PsyCorona’s scientific mission is its crisis-oriented mission to provide fast and openly accessible information relevant to the present pandemic. Given that the academic publication process can be slow, we sought to provide a more immediate way to access portions of the data. In close collaboration with the University of Groningen’s Center for Information and Technology, we built a secure, anonymous, Web-based data visualization tool that lets users easily examine key variables. Although members of the PsyCorona collaboration are also developing scientific articles for peer review, users are welcome to interact directly with the tool’s country-level data.

The purpose of this data visualization tool is twofold. First, it serves as a resource for researchers, analysts, and practitioners to understand people’s thoughts, feelings, and responses to the coronavirus as well as the extraordinary societal measures taken against it. Such knowledge could provide pilot data for researchers, inform current policies to contain the pandemic, or help society prepare for similar events in the future. Second, it serves as a test case for how psychological scientists can use data visualization to engage the public and share results with respondents. Tens of thousands of respondents invested time and effort to share their experiences, and the app affords them access and agency over the data (Tuck, 2009) as well as an interactive experience of how data can be used (e.g., Van der Krieke et al., 2016).

An up-to-date version of the tool can be accessed via our project website. Three information sections (“About,” “Data,” and “Take the Survey Now”) generally describe what PsyCorona is all about, where the data come from, and how to access the survey. There are also three data presentation sections (“The Sample,” “Psychological Variables,” and “Development”), which aim to facilitate a three-step approach: evaluation, examination/exploration, and validation.

1. Evaluation: The sections “The Sample” and “Psychological Variables” let users first check whether the data are relevant to their interests and questions.

2. Examination/exploration: The “Psychological Variables” and “Development” sections let users visualize psychological trends. Here are some examples:

- Country averages (e.g., “In the United States, how many days per week did people have in-person contact with others outside the home?”, “Were people more anxious in Italy or Spain?”)

- Basic relationships between variables (e.g., “Did Saudi Arabia’s relatively strict community rules correspond with more physical distancing?”, “Did countries with more community organization also have a higher sense of efficacy to mitigate the virus?”)

- Differences over time (e.g., “Did respondents in Brazil report an increase or a decline of trust in the government to fight COVID-19?”, “Did feelings of depression develop differently between certain Eastern and Western societies?”)

3. Validation: Users can customize the aspects of the sample they wish to view and whether to adjust for certain cross-cultural biases in survey responses. The “Sample Selection” option lets users switch between viewing either the full sample or only the 20 national subsamples with representative age/gender distributions. The “Transformation” button allows users to control for national response styles, that is, cultural tendencies to give higher or lower scores across all Likert-type scales in the survey (Gelfand et al., 2002).
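To make the idea of within-person standardized scores concrete, here is a minimal sketch. It assumes that standardization means subtracting each respondent's own mean across items and dividing by that respondent's own standard deviation; the exact computation behind the “Transformation” button is not specified here, and the function name and sample answers are hypothetical.

```python
from statistics import mean, pstdev


def standardize_within_person(responses):
    """Return z-scores of one respondent's answers relative to that
    respondent's own mean and (population) standard deviation."""
    m = mean(responses)
    sd = pstdev(responses)
    if sd == 0:
        # A respondent who gave the same answer to every item has no
        # within-person variation; return zeros instead of dividing by zero.
        return [0.0 for _ in responses]
    return [(r - m) / sd for r in responses]


# Hypothetical respondents answering the same five 1-7 Likert-type items.
# Both show the same pattern, but one uses the upper end of the scale.
high_scorer = [5, 6, 7, 6, 6]
low_scorer = [1, 2, 3, 2, 2]

z_high = standardize_within_person(high_scorer)
z_low = standardize_within_person(low_scorer)
```

In this sketch the two respondents end up with identical standardized profiles, because a general tendency to score high or low is removed and only the relative pattern across items remains.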

A Brief User’s Guide

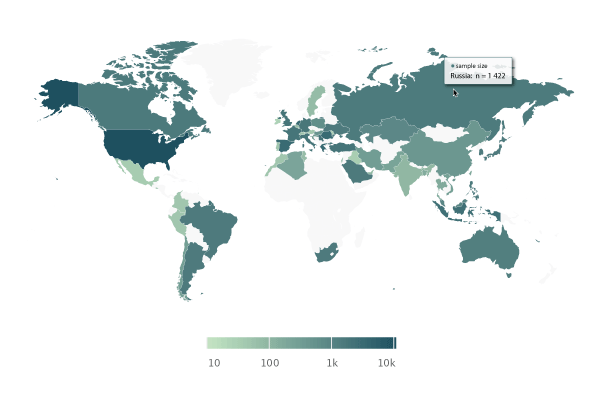

Users can examine different aspects of the data via three main sections: “The Sample,” “Psychological Variables,” and “Development.” “The Sample” provides information on gender, age, education, political orientation, and preferred language. Sample sizes also varied by country and region, so this panel lets users determine whether their countries and/or demographic groups of interest are adequately represented in the data (Figure 1).

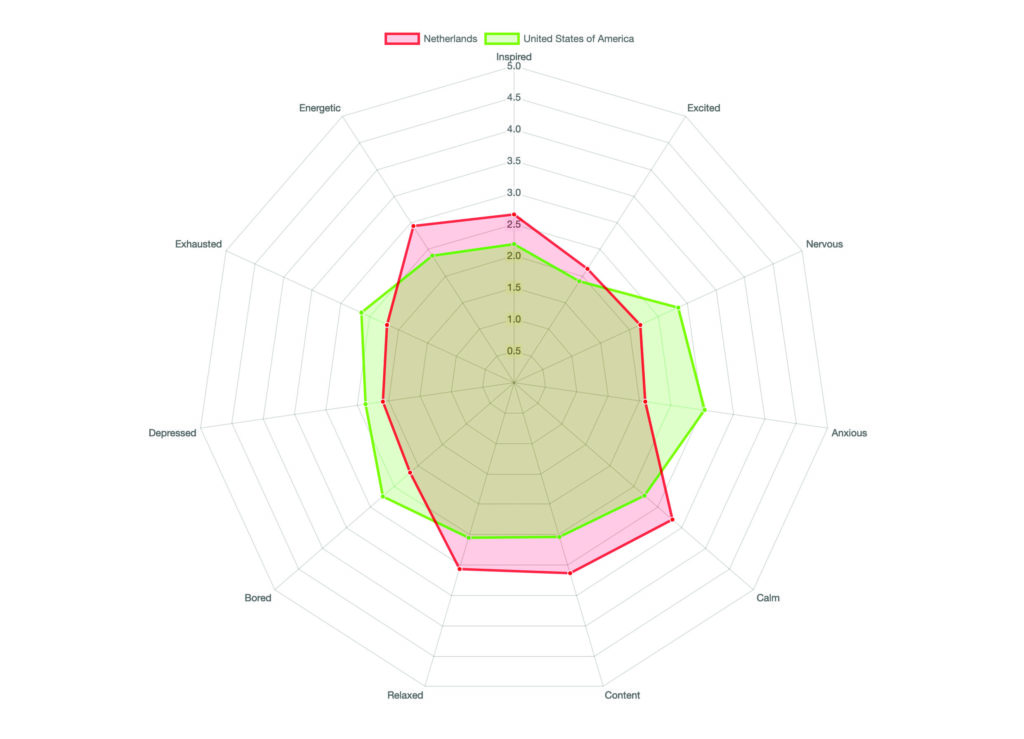

“Psychological Variables” gives access to a curated selection of different psychological variables, separated by country. This selection includes virus-relevant beliefs and attitudes, emotions and affect, attitudes toward the government and society, and self-reported behaviors relevant to the pandemic. For each variable, we provide country-level information on the central tendency (i.e., mean) and, where possible, measures of uncertainty around that value (e.g., a confidence interval). Users can examine and compare different psychological variables within one country, across multiple countries, or as global averages (e.g., “Did people in the United States, on average, have stronger positive or negative emotions than people in the Netherlands?”; Figure 2).
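As an illustration of what a country-level mean with a confidence interval involves, the following sketch computes a mean and an approximate 95% interval from a list of scores. It assumes a normal approximation (mean ± 1.96 standard errors), which is only one of several ways to express uncertainty and is rough for small samples; the country labels and scores are hypothetical and are not taken from the PsyCorona data.

```python
from math import sqrt
from statistics import mean, stdev


def mean_with_ci(values, z=1.96):
    """Return (mean, lower, upper) using a normal-approximation interval.

    z=1.96 corresponds to an approximate 95% confidence interval.
    """
    n = len(values)
    m = mean(values)
    standard_error = stdev(values) / sqrt(n)  # sample SD divided by sqrt(n)
    return m, m - z * standard_error, m + z * standard_error


# Hypothetical responses to one survey item in two countries.
scores_by_country = {
    "Country A": [3, 4, 5, 4, 4, 5, 3, 4],
    "Country B": [2, 3, 2, 4, 3, 2, 3, 3],
}

summary = {
    country: mean_with_ci(scores)
    for country, scores in scores_by_country.items()
}

for country, (m, lower, upper) in summary.items():
    print(f"{country}: mean={m:.2f}, 95% CI=[{lower:.2f}, {upper:.2f}]")
```

When the intervals of two countries overlap substantially, an apparent difference between their means should be read with particular caution.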

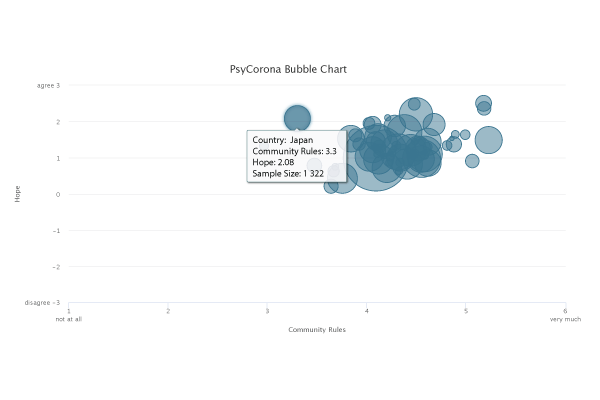

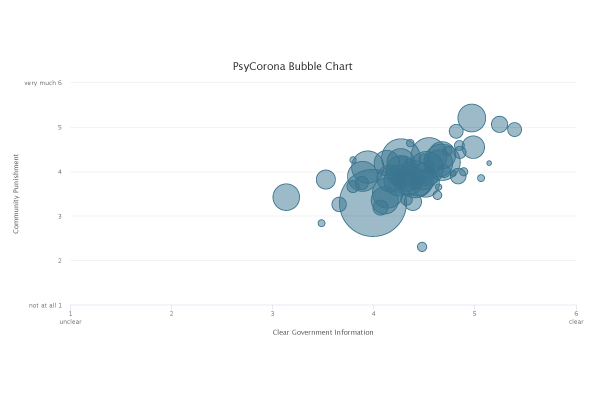

Further within the “Psychological Variables” section, users can find “Cross-Domain Relationships.” This subsection lets users plot two psychological variables against each other to visualize their relationship. Users can thus isolate unique, country-specific effects or notice global patterns, which in turn sets the basis for further inquiry. For example, Figure 3 illustrates a country-specific effect in which respondents in Japan report having relatively weak community rules around virus containment yet still maintain high hopes that the coronavirus situation will improve. Figure 4 illustrates an example of a global trend: One might wonder why, in countries where people report receiving clearer information from the government about the virus, they also report that their communities more strongly punish those who deviate from the rules. These patterns can form the basis for new insights and more targeted questions. Note that, to protect the privacy of our participants, the functionality of this subsection remains quite basic, but it may nevertheless help to initiate or support pandemic-related research and identify important knowledge gaps.
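A relationship between two variables at the country level can also be summarized numerically. The sketch below computes a Pearson correlation across country means. The choice of statistic is an assumption made for illustration, and the country means are invented; the tool itself presents such relationships as plots.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equally long lists of numbers."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariation = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variation_x = sum((x - mean_x) ** 2 for x in xs)
    variation_y = sum((y - mean_y) ** 2 for y in ys)
    return covariation / sqrt(variation_x * variation_y)


# Hypothetical country means for two variables (one value per country).
clear_government_information = [3.1, 3.8, 4.2, 4.9, 5.3]
punishment_of_rule_deviation = [2.4, 2.9, 3.5, 3.6, 4.4]

r = pearson_r(clear_government_information, punishment_of_rule_deviation)
print(f"Country-level correlation: r = {r:.2f}")
```

A correlation across country means describes countries, not individuals, so it should not be read as evidence that the same relationship holds for each respondent.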

Finally, the “Development” section allows users to look at country responses at different points in time. This allows for questions about a single variable (e.g., “Did trust in the Turkish government to fight COVID-19 remain consistent across points in time, or is the pattern more complex?”), along with questions about variable co-developments within a country or region (e.g., “In Indonesia, was the frequency of in-person social contacts preceded or followed by changes in hope that the COVID-19 situation would improve?”). Users can also see whether different countries showed different developments over time (e.g., “Did conspiracy beliefs develop similarly in the United Kingdom and the United States?”).

Altogether, this data visualization tool offers a glimpse into how people, across cultures and contexts, have reacted and responded to the COVID-19 pandemic as it has impacted them. It is meant to serve as a resource for researchers and practitioners to refine their work or to identify potential target points for intervention. It also serves to promote public engagement, with an eye toward communicating data in a way that affords personal agency. However, we caution users to bear in mind the uncertainty surrounding these data: As with all psychological research, the samples and measures can have important limitations, and any preliminary findings from this tool should be robustly investigated before firm conclusions are drawn.

References

Bélanger, J. (2020, May 11). How behavioral science can help contain the coronavirus: A new global survey could help us understand why some people follow the rules for avoiding COVID-19 and others don’t. Scientific American. https://blogs.scientificamerican.com/observations/how-behavioral-science-can-help-contain-the-coronavirus/

Centers for Disease Control and Prevention. (2020). COVID-19 guidance for older adults. https://www.cdc.gov/aging/covid19-guidance.html

Gelfand, M. J., Raver, J. L., & Ehrhart, K. H. (2002). Methodological issues in cross-cultural organizational research. In Y. Editor & X. Editor (Eds.), Handbook of industrial and organizational psychology research methods (pp. 216–241). Publisher Name. https://doi.org/10.1002/9780470756669.ch11

Tuck, E. (2009). Re-visioning action: Participatory action research and indigenous theories of change. Urban Review, 41(1), 47–65. https://doi.org/10.1007/s11256-008-0094-x

Van Bavel, J. J., Baicker, K., Boggio, P. S., Capraro, V., Cichocka, A., Cikara, M., Crockett, M. J., Crum, A. J., Douglas, K. M., Druckman, J. N., Drury, J., Dube, O., Ellemers, N., Finkel, E. J., Fowler, J. H., Gelfand, M., Han, S., Haslam, S. A., Jetten, J., . . . Willer, R. (2020). Using social and behavioural science to support COVID-19 pandemic response. Nature Human Behaviour, 4, 460–471. https://doi.org/10.1038/s41562-020-0884-z

Van der Krieke, L., Jeronimus, B. F., Blaauw, F. J., Wanders, R. B. K., Emerencia, A. C., Schenk, H. M., De Vos, S., Snippe, E., Wichers, M., Wigman, J. T. W., Bos, E. H., Wardenaar, K. J., & De Jonge, P. (2016). HowNutsAreTheDutch (HoeGekIsNL): A crowdsourcing study of mental symptoms and strengths. International Journal of Methods in Psychiatric Research, 25(2), 123–144. https://doi.org/10.1002/mpr.1495

Wenham, C., Smith, J., Morgan, R., & Gender and COVID-19 Working Group. (2020). COVID-19: The gendered impacts of the outbreak. The Lancet, 395(10227), 846–848. https://doi.org/10.1016/S0140-6736(20)30526-2

PsyCorona Collaborators: Managers: Jocelyn J. Bélanger (co-principal investigator), Elissa El Khawli, Ben Gützkow, Bertus Jeronimus, Maja Kutlaca, Edward Lemay, Anne Margit Reitsema, Jolien Anne van Breen, Caspar J. Van Lissa, Michelle R. vanDellen. Developers: Hung Chu, Nicoletta Giudice, Joshua Krause, Leslie Zwerwer. Researchers: Georgios Abakoumkin, Jamilah H. B. Abdul Khaiyom, Vjollca Ahmedi, Handan Akkas, Carlos A. Almenara, Anton Kurapov, Mohsin Atta, Sabahat Cigdem Bagci, Sima Basel, Edona Berisha Kida, Anna Bertolini, Nicholas R. Buttrick, Phatthanakit Chobthamkit, Hoon-Seok Choi, Mioara Cristea, Sára Csaba, Kaja Damnjanovic, Ivan Danyliuk, Arobindu Dash, Daniela Di Santo, Karen M. Douglas, Violeta Enea, Daiane Gracieli Faller, Gavan Fitzsimons, Michele Gelfand, Alexandra Gheorghiu, Ángel Gómez, Qing Han, Mai Helmy, Joevarian Hudijana, Ding-Yu Jiang, Veljko Jovanovic, Željka Kamenov, Anna Kende, Shian-Ling Keng, Tra Thi Thanh Kieu, Yasin Koc, Catalina Kopetz, Kamila Kovyazina, Inna Kozytska, Arie W. Kruglanski, Nóra Anna Lantos, Cokorda Bagus Jaya Lesmana, Winnifred R. Louis, Adrian Lueders, Najma Iqbal Malik, Anton Martinez, Kira McCabe, Mirra Noor Milla, Idris Mohammed, Erica Molinario, Manuel Moyano, Hayat Muhammad, Silvana Mula, Hamdi Muluk, Solomiia Myroniuk, Reza Najafi, Claudia F. Nisa, Boglárka Nyúl, Paul A. O’Keefe, Jose Javier Olivas Osuna, Evgeny N. Osin, Joonha Park, Gennaro Pica, Antonio Pierro, Jonas Rees, Elena Resta, Marika Rullo, Michelle K. Ryan, Adil Samekin, Pekka Santtila, Edyta Sasin, Birga Mareen Schumpe, Heyla A Selim, Courtney Soderberg, Michael Vicente Stanton, Wolfgang Stroebe, Samiah Sultana, Robbie M. Sutton, Eleftheria Tseliou, Akira Utsugi, Anne Marthe van der Bles, Kees Van Veen, Alexandra Vázquez, Robin Wollast, Victoria Wai-Lan Yeung, Somayeh Zand, Iris Lav Žeželj, Bang Zheng, Andreas Zick, Claudia Zúñiga.
