Can We Measure Journal Quality?

Thomson Reuters (formerly the Institute for Scientific Information, or ISI, which I will use for short) recently released the 2009 impact data for journals in psychology, and I have found myself reading many messages about them from various correspondents. The 2009 journal impact factor (IF) is the mean number of times that articles published in a journal in 2007 and 2008 were cited in 2009. That is, the total number of citations in 2009 to papers the journal published in 2007 and 2008 (A), divided by the number of papers it published in those 2 years (B), gives the impact factor (A/B) for the journal. For example, the 2009 IF for Psychological Science is 5.090, so the papers it published in 2007 and 2008 were cited an average of 5 times (and a tad) in 2009.
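The A/B calculation is simple enough to write down as a short Python sketch. The function name and the counts in the example are hypothetical, and the sketch ignores ISI's rules about which kinds of items count toward the denominator.

```python
def impact_factor(citations_to_prior_two_years: int, papers_in_prior_two_years: int) -> float:
    """Two-year impact factor, A / B.

    A: citations received in the target year (e.g., 2009) by papers the
       journal published in the two preceding years (2007 and 2008).
    B: number of papers the journal published in those two years.
    """
    if papers_in_prior_two_years <= 0:
        raise ValueError("the journal must have published at least one paper")
    return citations_to_prior_two_years / papers_in_prior_two_years


# Hypothetical journal: 200 papers in 2007-2008, cited 1,000 times in 2009.
print(impact_factor(1000, 200))  # 5.0
```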

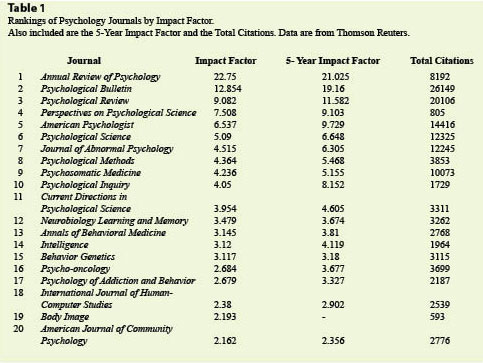

I am chair of the APS Publications Committee, so I watch the ratings of our journals with interest. All our numbers were up this year, which is great. Because these journals cover the entire field of psychology, ISI places them in a category called “Multidisciplinary Psychology Journals,” of which there are 111. Remarkably, APS has 3 journals in the top 11 in this category for 2009: Perspectives on Psychological Science ranked 4th, Psychological Science ranked 6th, and Current Directions in Psychological Science ranked 11th (see Table 1 for the first 20 journals in this category ranked by IF). Of course, we have an excellent fourth journal, Psychological Science in the Public Interest, that ISI does not rank for various reasons, mostly having to do with its frequency of publication. If ISI ever rates it, I feel sure it will also rank quite high. In addition to the standard (2-year) IF, ISI also calculates a 5-year IF and other data, such as the total number of citations in 2009 to papers from the previous 2 years. These data are included in Table 1.

A few interesting facts may be gleaned from the data. First, review journals (and hence review articles) are the most highly cited in our field. The top 5 journals are all review journals; perhaps the surprise is that Perspectives, APS’s newest journal, has already broken into this elite circle. Second, Psychological Science is the most cited journal in this “multidisciplinary” category that publishes empirical articles. In fact, in a separate analysis I received of strictly empirical psychology journals, Psychological Science was Number 1. Because Psychological Science publishes a relatively large number of articles (407 in 2007 and 2008), its denominator is much larger than that of other journals that publish few articles but still have high impact factors, so its IF rests on a far larger total number of citations. For example, Cognitive Psychology came in 9th in this list of empirical journals with an IF of 4.12, but it published only 41 papers in that 2-year span, so its total impact (total citations) is much smaller. Third, researchers at ISI are a bit shaky on categorizing psychology journals. Assuming that “multidisciplinary” journals are supposed to cover many areas of the field (as Psychological Bulletin and Psychological Science do), how exactly did Neurobiology of Learning and Memory, Behavior Genetics, and Psycho-oncology (among others) get into this list? As far as I have been able to discern, the classification process is not explained.
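Because IF = A/B, multiplying an impact factor by the number of papers gives back, roughly, the total citations it averages. The sketch below applies that to the two journals just mentioned, using the figures quoted above; the totals are only approximations, because the published IFs are rounded and ISI's denominator may not match a simple count of papers.

```python
def implied_total_citations(impact: float, n_papers: int) -> float:
    """Invert IF = A / B to estimate A, the total citations behind an IF."""
    return impact * n_papers


# (2009 IF, papers published in 2007-2008), as quoted in the text.
journals = {
    "Psychological Science": (5.090, 407),
    "Cognitive Psychology": (4.12, 41),
}

for name, (impact, n_papers) in journals.items():
    total = implied_total_citations(impact, n_papers)
    print(f"{name}: IF {impact}, roughly {total:,.0f} citations in 2009")
```

The two IFs differ by only about 1 point, but the implied citation totals (about 2,072 versus about 169) differ by more than a factor of 10.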

I applaud our hardworking APS editors (and authors) for the fact that we have high-impact journals. Bravo! It is a great feat for these journals and for APS. We do not publish many journals, but they rank highly among their peers.

Of course, the impact factor is not without its critics. Yes, it is an objective measure, something we are all supposed to like, but as with all measures, people quibble about how it is calculated and what it means. If you explore the topic on Google, you can find dozens of articles critical of the IF, either in general or for particular fields. Even the Wikipedia entry lists several common criticisms. Like most rating systems, the journal impact factor captures some qualities of journals and may miss others.

The impact factor is only a rough gauge: some articles in journals with moderate impact factors have become highly cited, and conversely, many articles in high-impact journals are not much cited. In the part of our intellectual world that I inhabit, the Journal of Memory and Language has a respectable but not stellar IF (3.221 for 2009), which might suggest to the uninitiated that it does not publish high-impact papers and that they should submit their work elsewhere. But it does: Fergus Craik and Robert Lockhart published a paper in 1972 (when the journal was called the Journal of Verbal Learning and Verbal Behavior) that has been cited 3,388 times (about 90 times per year); my colleague Larry Jacoby published a paper in the journal in 1991 that has now been cited 1,545 times (about 84 times per year). And those are just two examples of many. (Citation data reported in this article are from the Institute for Scientific Information database as of mid-July 2010.)

The point is that the correct unit of analysis when considering citations is usually the individual paper (or researcher), not the journal in which it was published.

Almost by definition, most papers will not have great impact, even if they are published in Psychological Science or Psychological Review. The great majority of papers in even the highest impact journals are not much cited. How can that be, you ask?

The answer has to do with distributions of citation data. For one reason or another, I have examined citation data (for journals, for individuals, for departments) over many years. Psychologists usually like to think that behaviors generated by human beings will arrange themselves along a normal curve. That might be a correct assumption (at least approximately) for many purposes, but citation distributions are never normal. They are always strongly skewed to the right (i.e., positively skewed). In the case of articles in journals, it turns out that most papers are not much cited, but a few are cited a lot. Similarly, most researchers are not cited much, but a few are cited a lot. Even the citation counts for papers by “highly cited psychologists” (those researchers out on the right tail of the distribution) are positively skewed; that is, most of the papers of highly cited people are not much cited, but a few papers are cited a huge number of times. Highly cited researchers usually publish a lot.

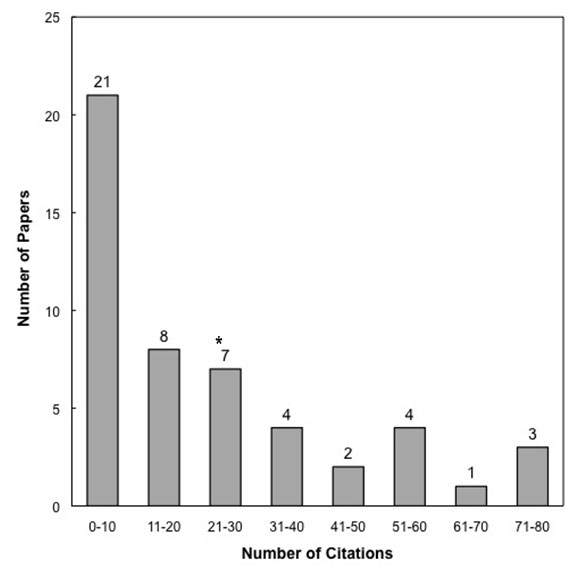

Figure. The data represent the frequency of citations to the 50 articles published in Perspectives on Psychological Science during its first 2 years of publication (2006 and 2007).

To illustrate how this issue affects journal impact factors, consider the frequency distribution in the accompanying figure. The data are the citations recorded by Thomson ISI (as of July 9, 2010) for the articles appearing in Perspectives on Psychological Science over its first 2 years of publication (22 articles were published in 2006 and 28 in 2007, so 50 are represented in the figure). The distribution obviously has a strong rightward skew, so how should we measure its central tendency?

The mode of the distribution is 0, because six papers have not been cited at all. The median is 12. However, the mean of the distribution is 22.6, indicated (roughly) by the asterisk on the figure. Of course, the data in the figure do not represent an impact factor: they are citations accumulated over several years for articles published in 2006 and 2007, whereas the 2008 impact factor would have been calculated from citations to those articles in 2008 alone. Still, the figure illustrates the kind of distribution that arises from citation data on articles, one strongly skewed to the right. The impact factor is greatly swayed by a few very highly cited papers, while most papers (in whatever journal) are not much cited. Three papers in Perspectives from those years have already been cited between 70 and 80 times, pulling up the mean. Keep in mind, too, that Perspectives is near the top of the pack, as shown in Table 1.
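The gap between mean, median, and mode in a right-skewed distribution is easy to reproduce. The citation counts below are invented for illustration (they are not the Perspectives data in the figure), but they share its shape: several uncited papers, many modestly cited ones, and two heavily cited ones.

```python
import statistics

# Invented citation counts for 13 articles (NOT the data in the figure).
citations = [0, 0, 0, 1, 2, 3, 4, 5, 8, 12, 15, 40, 75]

mode = statistics.mode(citations)      # most frequent value
median = statistics.median(citations)  # middle value
mean = statistics.mean(citations)      # the kind of average an IF reports

print(f"mode={mode}, median={median}, mean={mean:.1f}")
# mode=0, median=4, mean=12.7

# Drop the two most-cited papers (the list is already sorted).
without_top_two = citations[:-2]
print(f"mean without the top two papers: {statistics.mean(without_top_two):.1f}")
# mean without the top two papers: 4.5
```

Removing just two papers cuts the mean by nearly two-thirds while the median moves only from 4 to 3, which is the sense in which a mean-based measure is swayed by a few highly cited papers.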

The fact that impact factors are based on the means of highly skewed distributions is one common criticism of the measure. Does that really matter? I doubt it, because when I look at other measures of “journal quality” my impression is that they correlate highly (though far from perfectly) with impact factors.

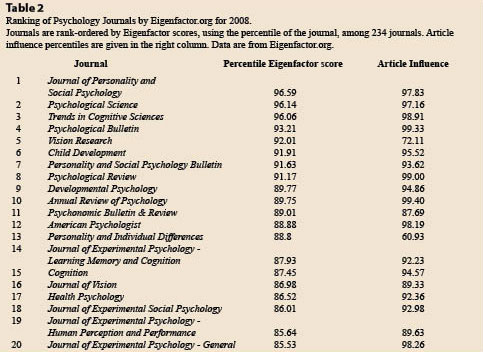

Several other measures of journal quality are now available. One promising new measure is called the Eigenfactor score. The Eigenfactor score for a journal is “a rating of the total importance of a scientific journal,” according to Wikipedia. The claims are more modest if you consult Eigenfactor.org, the group that actually computes the scores and makes them freely available. The Eigenfactor.org website says the score is “a measure of the overall value provided by all of the articles published in a given journal in a year.” The basic technique for calculating Eigenfactor scores is similar to Google’s PageRank algorithm (go to Eigenfactor.org if you want more information). According to the Wikipedia article, “Eigenfactor scores are intended to give a measure of how likely a journal is to be used, and are thought to reflect how frequently an average researcher would access content from that journal.”
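To give a feel for the PageRank idea behind the Eigenfactor score, here is a simplified sketch in which a journal's score depends both on how often it is cited and on the scores of the journals doing the citing. The three journals, their citation counts, and the damping factor of 0.85 (the value conventionally used in PageRank) are illustrative assumptions; the actual Eigenfactor computation includes refinements described at Eigenfactor.org (for example, it discards journal self-citations, which is why the example matrix has zeros on its diagonal).

```python
def pagerank_scores(cites, damping=0.85, iterations=100):
    """Simplified PageRank-style journal scores via power iteration.

    cites[i][j] is the number of citations from journal i to journal j.
    Returns one score per journal; the scores sum to 1.
    """
    n = len(cites)
    out_totals = [sum(row) for row in cites]
    scores = [1.0 / n] * n  # start with equal weight on every journal
    for _ in range(iterations):
        new_scores = [(1.0 - damping) / n] * n  # "random jump" share
        for i in range(n):
            for j in range(n):
                if out_totals[i] == 0:
                    # A journal that cites nothing spreads its weight evenly.
                    share = 1.0 / n
                else:
                    # Journal i passes weight to j in proportion to its citations of j.
                    share = cites[i][j] / out_totals[i]
                new_scores[j] += damping * scores[i] * share
        scores = new_scores
    return scores


# Hypothetical citation counts among journals A, B, and C (self-citations excluded).
cites = [
    [0, 30, 10],  # A cites B 30 times and C 10 times
    [20, 0, 5],   # B cites A 20 times and C 5 times
    [40, 10, 0],  # C cites A 40 times and B 10 times
]
for name, score in zip("ABC", pagerank_scores(cites)):
    print(f"{name}: {score:.3f}")
```

Journal A ends up with the highest score because it receives most of the outgoing citations of both other journals, and those journals in turn carry weight of their own.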

The Eigenfactor score (unlike the impact factor) is driven in part by the size of the journal (journals that publish more articles will have higher scores, just as they have higher total impact). However, Eigenfactor.org also publishes an article influence score, which scales the Eigenfactor score according to the number of articles published in the journal and thus is more comparable to the impact factor.
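The size adjustment can be pictured as comparing a journal's share of total influence with its share of all published articles. The sketch below is a simplified illustration with made-up numbers, not Eigenfactor.org's exact formula; the exact definition of the article influence score (including the window of years over which articles are counted) should be checked in Eigenfactor.org's own documentation.

```python
def per_article_influence(journal_score, total_score, journal_articles, total_articles):
    """Ratio of a journal's share of all influence to its share of all articles.

    1.0 means the journal's articles are, on average, as influential as the
    average article; values above 1.0 mean more influential.
    """
    influence_share = journal_score / total_score
    article_share = journal_articles / total_articles
    return influence_share / article_share


# Made-up numbers: scores sum to 100 across 200,000 articles in all journals.
big = per_article_influence(2.0, 100.0, 4_000, 200_000)   # large journal
small = per_article_influence(0.5, 100.0, 200, 200_000)   # small journal
print(f"large journal: {big:.2f}, small journal: {small:.2f}")
# large journal: 1.00, small journal: 5.00
```

The large journal has four times the overall score of the small one but, per article, only a fifth of its influence, which is why a size-scaled figure is more comparable to the impact factor.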

Another great feature is that Eigenfactor.org provides helpful percentile rankings of journals on both measures. Out of 234 psychology journals ranked in 2008, Psychological Science ranked 2nd, at the 96.1 percentile. The Journal of Personality and Social Psychology ranked first in psychology, at the 96.6 percentile (the percentiles refer to all journals in science and social science, not just psychology). Table 2 reports the top 20 psychology journals in terms of Eigenfactor scores. Current Directions in Psychological Science ranked 24th (83.9 percentile), and the other two APS journals are not yet ranked. I also include the article influence scores for these journals in the table. By the way, the Journal of Memory and Language ranked 21st, at the 84.7 percentile (and was even higher in the article influence score).
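A percentile ranking of this kind reports what percentage of journals score below a given journal. The minimal sketch below uses made-up scores; how Eigenfactor.org handles ties, and whether a journal is counted in its own reference group, are assumptions here rather than their documented method.

```python
def percentile_rank(score, all_scores):
    """Percentage of scores in all_scores that are strictly below `score`."""
    below = sum(1 for s in all_scores if s < score)
    return 100.0 * below / len(all_scores)


# Made-up scores for ten journals drawn from all fields.
all_journals = [0.2, 0.5, 0.9, 1.1, 1.4, 2.0, 2.2, 3.5, 4.0, 6.0]
print(percentile_rank(4.0, all_journals))  # 80.0
```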

Another promising measure is the h-index (see my Observer column from April 2006, when that measure was relatively new). The original h-index was intended to assess the impact of individual researchers: h is the largest number such that h of the articles a person has published have each been cited at least h times (so an h of 40 means that the person has 40 articles cited 40 times or more). However, h can be calculated for a journal just as for an individual, and it can also be calculated over a specified period of time (e.g., a decade) for comparisons. For example, the h for Psychological Science for the past 5 years is 35 (that is, in the past 5 years, 35 articles have been cited 35 times or more); its 10-year h is 87. The values for Current Directions are 23 and 48 for 5- and 10-year periods, respectively. The 5-year value for Perspectives is 24. After looking haphazardly at some other journals, I can opine that these numbers for APS journals are quite respectable, and the Psychological Science values are quite high. Psychological Bulletin’s 5- and 10-year h values are 39 and 106, the highest I found in my admittedly unsystematic search.
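Computing h from a list of citation counts takes only a few lines: sort the counts from most to least cited and find the last rank at which a paper's count still meets or exceeds its rank. The sketch below uses invented counts; restricting the input to papers from a particular 5- or 10-year window gives the kind of period-specific values described above.

```python
def h_index(citation_counts):
    """Largest h such that h papers have each been cited at least h times."""
    ranked = sorted(citation_counts, reverse=True)  # most-cited paper first
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank
        else:
            break  # counts only decrease from here, so h cannot grow
    return h


# Invented citation counts for one researcher's (or one journal's) papers.
papers = [52, 40, 9, 6, 5, 4, 1, 0]
print(h_index(papers))  # 5
```

The two very highly cited papers contribute no more to h than any other paper above the threshold, so h is much less sensitive to the long right tail than a mean would be.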

Ranking journals in various ways measures something worthwhile, but no single measure should be regarded as “the” right one. The IF has existed the longest and thus is the most established, and it is certainly useful as a quick assessment for some purposes. However, I do fear that overreliance on one measure for decisions regarding promotion, tenure, grant applications, or other evaluative purposes may be harmful. At least for established investigators, the critical issue is the quality of the individual’s research, and the focus should be on that quality (its importance, its rigor, its insightfulness, its impact). These features may not be perfectly correlated with the impact factors of the journals in which the research is published. Of course, for younger faculty whose work has not had time to make its mark in citations and other indices, tenure and promotion committees may take the quality of journals as a proxy (even if an imperfect one) for the likely quality and importance of the work.

We certainly would not want too much to turn on impact factors when psychologists are compared with researchers in other fields. For reasons I do not fully understand, impact factors for psychology journals are quite low relative to those in some other scientific fields. It turns out that impact factors in the biological sciences (including neuroscience) generally outpace those in other sciences. Perhaps I shall write more on that topic in another column.
