Out of the Box and Into the Lab, Mimes Help Us ‘See’ Objects That Don’t Exist

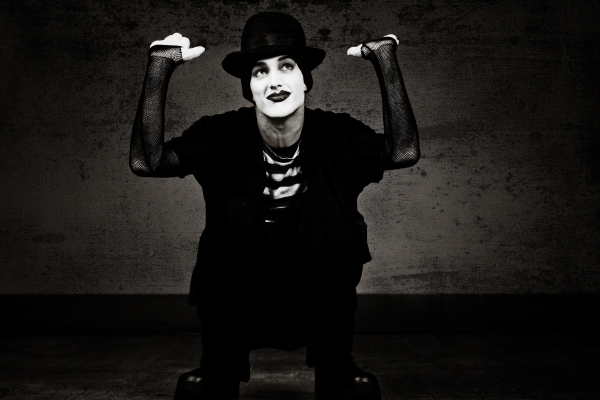

When we watch a mime seemingly pull on a rope, stumble over an obstacle, or push against the sides of a transparent box, we don’t struggle to recognize the implied objects—our minds automatically construct vivid representations of them, even though they are not actually seen.

To explore how the mind processes and identifies these fictitious objects, researchers brought the art of miming into the lab. Their results reveal that humans mentally represent invisible, implied surfaces both rapidly and automatically.

Chaz Firestone (Johns Hopkins University) and Pat Little (New York University) talk with APS’s Charles Blue about this research in a new episode of Under the Cortex, the APS podcast.

“Most of the time, we know which objects are around us because we can just see them directly. But what we explored here was how the mind automatically builds representations of objects that we can’t see at all but that we know must be there because of how they are affecting the world,” said Chaz Firestone, an assistant professor who directs Johns Hopkins University’s Perception & Mind Laboratory and the senior author on a paper published in the journal Psychological Science. “That’s basically what mimes do. They can make us feel like we’re aware of some object just by seeming to interact with it.”

To study this phenomenon, Firestone and his colleagues had a group of 360 participants watch clips in which Firestone himself mimed colliding with a wall and stepping over a box in a way that suggested those objects were there, only invisible.

Afterward, a black line appeared in the spot on the screen where the implied surface would have been. This line could be horizontal or vertical, so it either matched or didn’t match the orientation of the surface that had just been mimed. Participants had to quickly identify the line’s orientation. The researchers found that people responded significantly faster when the line aligned with the mimed wall or box, suggesting that the implied surface was already represented in their minds—so much so that it affected their responses to the visible surface they saw immediately after.

Participants had been told not to pay attention to the miming, but they couldn’t help but be influenced by those implied surfaces, said first author Pat Little, who helped conduct the research as an undergraduate at Johns Hopkins and is now a graduate student at New York University.

“Very quickly people can realize that the mime is misleading them and that there is no actual connection between what the person does and the type of line that appears,” Little said. “But even if they think, ‘I should ignore this thing because it’s getting in my way,’ they can’t. That’s the key. It seems like our minds can’t help but represent the surface that the mime is interacting with—even when we don’t want to.”

Reference

Little, P. C., & Firestone, C. (2021). Physically implied surfaces. Psychological Science. Advance online publication. https://doi.org/10.1177/0956797620939942
