Preregistration Becoming the Norm in Psychological Science

A methodological revolution is underway in psychology, with preregistration at the forefront. Methodologists have made the case for the value of preregistration — the specification of a research design, hypotheses, and analysis plan prior to observing the outcomes of a study. And indeed, it is hardly radical to hold that predictions should be specified before looking at the data.

Preregistration improves research in two ways. First, preregistration provides a clear distinction between confirmatory research that uses data to test hypotheses and exploratory research that uses data to generate hypotheses. Mistaking exploratory results for confirmatory tests leads to misplaced confidence in the replicability of reported results.

Second, preregistering may reduce the influence of publication bias on effect-size estimation. Journals favor submissions that report statistically significant effects, a bias that tends to inflate estimates of effect size in the published literature. If preregistrations are posted in searchable registries, then it is possible to discover all research on a topic, not just the research that got published.
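The inflation described above can be illustrated with a small simulation. This is only a sketch under stated assumptions: the true effect size, group size, number of studies, and significance cutoff are hypothetical values chosen for illustration, not figures from any registry or from this article. It compares the average effect across every simulated study (what a complete registry would let a reader recover) with the average across only the statistically significant ones (a stand-in for what gets published).

```python
import random
import statistics

# All values below are illustrative assumptions, not figures from the article.
TRUE_EFFECT = 0.2      # assumed true standardized mean difference
N_PER_GROUP = 20       # assumed participants per group
N_STUDIES = 5000       # number of simulated studies
CRITICAL_T = 2.02      # approximate two-sided 5% cutoff for 38 degrees of freedom


def simulate_study(rng):
    """Simulate one two-group study; return (observed effect, significant?)."""
    control = [rng.gauss(0.0, 1.0) for _ in range(N_PER_GROUP)]
    treated = [rng.gauss(TRUE_EFFECT, 1.0) for _ in range(N_PER_GROUP)]
    pooled_sd = ((statistics.variance(control) + statistics.variance(treated)) / 2) ** 0.5
    observed = (statistics.mean(treated) - statistics.mean(control)) / pooled_sd
    # With equal group sizes, t = d * sqrt(n / 2).
    t_stat = observed * (N_PER_GROUP / 2) ** 0.5
    return observed, abs(t_stat) > CRITICAL_T


def run_simulation(seed=2018):
    """Run all studies; return (effects from every study, effects from significant ones)."""
    rng = random.Random(seed)
    results = [simulate_study(rng) for _ in range(N_STUDIES)]
    all_effects = [effect for effect, _ in results]
    published = [effect for effect, significant in results if significant]
    return all_effects, published


all_effects, published_effects = run_simulation()
mean_all = statistics.mean(all_effects)
mean_published = statistics.mean(published_effects)
print(f"mean effect, every simulated study: {mean_all:.2f}")
print(f"mean effect, significant studies only: {mean_published:.2f}")
```

Under these assumptions, the average over all studies stays close to the assumed true effect, while the average over significant studies alone comes out much larger. That gap is what a searchable registry of all preregistered studies could help close.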

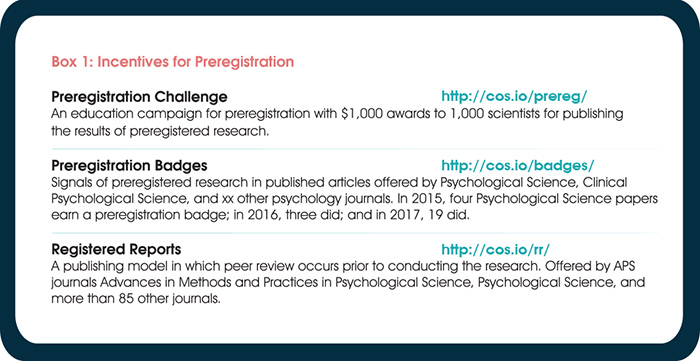

Registries are available for depositing and discovering preregistrations. An emerging research community is evaluating the extent to which scientists can embrace and practice preregistration. Many journals recognize articles reporting preregistered research with badges. A related trend is for journals to adopt Registered Reports, in which preregistrations are submitted for peer review before data collection begins.

As a consequence of all this, psychological scientists are preregistering research at unprecedented and accelerating rates. Change is happening, much of it driven by APS’s journals, and there is plenty of guidance available for scientists wanting to adopt this practice. Here are some examples:

- Psychological scientist E. J. Wagenmakers and his colleagues provided the theoretical rationale for preregistration in “An agenda for purely confirmatory research” — one of the most cited articles from an influential 2012 special issue of Perspectives on Psychological Science on improving research practices.

- In a 2016 Observer story, Psychological Science Editor-in-Chief Steve Lindsay, Dan Simons, Editor of the new APS journal Advances in Methods and Practices in Psychological Science, and Clinical Psychological Science Editor Scott Lilienfeld reviewed the fundamentals of preregistration and described how it is being incorporated into publishing at APS journals.

- Brian Nosek and colleagues have just published “The Preregistration Revolution,” an article in the Proceedings of the National Academy of Sciences addressing some of the pragmatic challenges of conducting preregistration, such as what to do when the data already exist or the study is multivariate or longitudinal.

Box 2: Universities leading the COS Preregistration Challenge as of February 19, 2018. For the full list, visit https://cos.io/prereg/.

Organizations in the social and behavioral sciences field have set up registries to make it easy to preregister. These groups include the American Economic Association’s RCT registry, eGAP for political research, RIDIE for developmental economics, and the free workflow service OSF (http://osf.io) for any kind of research. Registries such as OSF enable researchers to embargo preregistrations so they can complete their research before making their designs publicly accessible. AsPredicted.com also provides an easy way to generate a preregistration, though it is not a formal registry because its preregistrations can stay private forever. That has the advantage of protecting researchers’ privacy and the disadvantage of making some research nondiscoverable.

One might hope that researchers would preregister just because it is good practice, but presuming that would mean neglecting psychology’s insights on behavior change. Adopting new behaviors is hard, particularly if they are unfamiliar and if the incentives counter the adoption. For example, maximizing publishability of findings encourages retaining as much flexibility in analysis and reporting as possible, even at the cost of the accuracy of the results. If preregistration is to be adopted widely, the incentives for doing it will need to outweigh the incentives against it. Some of that change is occurring already.

The most direct incentive change is Registered Reports, which integrate preregistration with publication. Authors submit their question, methodology, and analysis plan for review before conducting the research. If accepted, that protocol is a preregistration of the confirmatory aspects of the study that will be published regardless of outcome as long as outcome-independent quality control criteria are met. The final paper clearly distinguishes between confirmatory tests and any exploratory findings that were examined after observing the data.

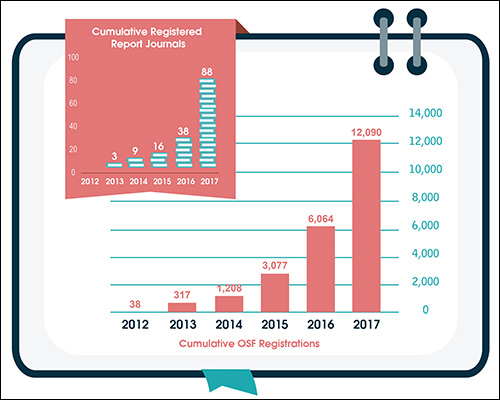

The growth in preregistration has been dramatic, as shown by the surge in journals encouraging preregistration through badges or Registered Reports and by the total number of registrations accumulating on OSF. Registrations rose from just 38 in 2012 to more than 12,000 in 2017, more than doubling each year on average (see below).
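As a rough arithmetic check on the two OSF counts quoted above, the average yearly growth factor they imply can be computed directly. This sketch assumes the counts are totals for the years named, and it treats "more than 12,000" as a lower bound of exactly 12,000. The variable names are illustrative.

```python
# Counts quoted in the text; 12,000 is used as a lower bound for "more than 12,000".
first_year, first_count = 2012, 38
last_year, last_count = 2017, 12_000

years = last_year - first_year

# Average yearly multiplier needed to get from the first count to the last.
growth_factor = (last_count / first_count) ** (1 / years)

# What the 2017 count would be if registrations had exactly doubled each year.
doubling_projection = first_count * 2 ** years

print(f"implied average yearly growth factor: {growth_factor:.2f}")
print(f"count after {years} years of exact doubling: {doubling_projection}")
```

Exact doubling over five years would give about 1,200 registrations. Reaching 12,000 implies an average of roughly threefold growth per year.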

Psychology is not the only community adopting preregistration, but psychological scientists are leading the way in initiating the behavior and in evaluating its effectiveness for improving research practices. Ongoing self-study of research practices will foster continuous improvement and thereby accelerate the pace of discovering replicable phenomena and determining the factors that modulate their occurrence and size.

Comments

Utter garbage. Preregistration does nothing to solve the terminal problems for psychological research. Rather, it’s a typical clerical-psychology approach .. which has the veneer of a solution while enhancing the status of its protagonists, but actually providing a flim-flam solution for what is fast becoming a flim-flam-dominated discipline. Scientists do not need this kind of clerical make-work; rather, we go about our business with scientific integrity and utter honesty in how we undertake investigations and analyses of data, and report them accordingly – something many psychologists cannot do without spin, hand-waving, mad assumptions about quantity, and invoking the mythology of ad-hoc latent variables. Pre-registration is about as much use (or meaningful sense) as Basil Fawlty’s suitcase full of hippopotamus sauce. When will so many psychologists begin to understand what scientific investigation actually requires of us? It’s certainly not “pre-registration” of an investigation.

Preregistering is a tool that promotes integrity and discourages hand waving. The clerical burden is not so great, and much of the effort invested in preparing a detailed preregistration is repaid in time saved when analyzing the data. If the results do not turn out to be sufficiently informative to warrant submission for publication, then the preregistration and data file together create a useful lab archive of the study. If the findings do warrant publication, then the preregistration can serve as a draft Method section.

Preregistering is less important in some domains of psychology than others. Psychophysics, for example, benefits from well-developed formal models, reliable measures, and a tradition of direct replication, a combination that greatly reduces concerns about researcher degrees of freedom in sample sizes, exclusions, transforms, and analyses (see Scholl, http://perception.yale.edu/papers/17-Scholl-APSObserver.pdf). But those features also make it trivially easy for psychophysicists to do preregistrations. If a lab has various standard operating procedures, those need be documented only once and thereafter merely pointed to. Similarly, the preregistration for an in-house replication can often be completed in a few minutes.

Preregistration is certainly not a cure-all. It is an easy, small step that helps reduce the risk of misleading uses of inferential statistics. The much harder problems have to do with developing better measures and better theories. But specifying sample size, exclusion rules, transforms, predictions, and analyses in advance of data collection will, I believe, help psychologists develop a foundation of replicable phenomena that will provide a sound basis for developing such measures and theories.

“which has the veneer of a solution while enhancing the status of its protagonists, but actually providing a flim-flam solution for what is fast becoming a flim-flam-dominated discipline”

You obviously underestimate the magical qualities of pre-registration. Take “Registered Reports” for instance:

After years of talking about how the bad state of psychological science was partly due to reviewers, editors, and journals pressuring researchers into leaving out analyses, conditions, and measures in their papers in order to “tell a nicer story”, the people behind Registered Reports thought it was a good idea to have “peer-review only” pre-registration where all the crucial pre-registration information can subsequently be kept a secret from the reader. Several “Registered Reports” have already been published with “peer-review only” pre-registration, where the reader has no access to the registration information via inclusion of a simple link in the paper for instance.

This was/is all done under the guise of “improving” science, “open science”, and being “transparent”. In their words “it is rigorous in a way that conventional unreviewed registration is not.”

The irony of essentially trusting the same people who apparently have helped cause this mess in psychological science in the first place, and removing 2 crucial aspects of pre-registration (transparency and accountability) under the guise of “improvement” and “transparency” seems to have been lost on them…

Also see: http://andrewgelman.com/2017/09/08/much-backscratching-happy-talk-junk-science-gets-share-reputation-respected-universities/#comment-560672

Two remarkable things may have come from this all though:

1) I actually applaud the people behind “Registered Reports” for doing what I thought was impossible. They may have actually made (psychological) science even worse by being an active part of creating and introducing a format that essentially makes the 2 most important things about pre-registration (transparency and accountability) magically vanish.

And 2) As a clinical psychologist I always wanted to try and help people with psychological disorders. I value rigorous research in light of that, and wanted to try and help improve psychological science. One year out of university, without having been able to get a job, and living back with my mother, I started helping out with the Open Science Collaboration from a tiny room in the attic on an old computer.

Now, however, I actually have lost all hope and faith in large part as a result of this “Registered Report” debacle. I am now not even sure whether I have been part of a solution or of creating an even bigger mess. Regardless: I am done with it all.

Both realizations are pretty remarkable in my opinion, and I applaud the people behind “Registered Reports” for having helped achieve that.

That is all, thank you.

Hi Alex,

Thanks for your comments and for pointing out shortcomings as they arise; that provides the necessary push to instigate improvements to any process, new or old. Editors working on Registered Reports are now more aware of the need to ensure that the submitted plans do actually get into a registry (or published by the journal itself). On the OSF, we have a new registration form specifically for accepted stage 1 protocols (http://help.osf.io/m/registrations/l/524206-understand-registration-forms) and are about to release a page that will simplify the creation of a project with one of these registered stage 1 protocols (in the same way that this page https://osf.io/prereg guides a researcher right to a preregistration template).

You can also see how the shortcoming you mention is being addressed by publishers, who are pushing for best practices in this format. Wiley recently released these standards for their journals that are accepting RRs: https://authorservices.wiley.com/author-resources/Journal-Authors/submission-peer-review/registered-reports-policy.html

So all in all, the format is not perfect. Meta-scientists are evaluating the effect that RRs have on transparency and reproducibility, and shortcomings that you or other researchers find will be used to improve the format in the future. These Sisyphean tasks can be draining, but we (you, us, and others) all do it for the cause of more credible research.

Best,

David

Thank goodness this is happening! This is a good step towards making psychology a more scientific discipline — the relatively “unscientific state” of psychology having disturbed me ever since I came into the field many years ago after obtaining a solid background in the hard sciences.

If psychologists really want to avoid hand waving & data dependent selections, why don’t they have blind data analysis? Either do the data analysis blindly, or let an independent group do it. Preregistration in clinical trials goes with blindness.

In psychology, however, even if this is solved, there’s the question of linking statistical results from studies to the actual phenomenon of interest.
