It Doesn’t Take a Scientist To See Through Implausible Research

Can people who have not been trained in psychological science predict whether new studies will obtain the same results as existing social-science research? They can, especially if the research hypothesis seems dubious, according to a new study in Advances in Methods and Practices in Psychological Science.

New studies by independent labs have failed to replicate many key findings from the social-science literature. Some of those failures have been attributed to questionable individual research practices and others to problems affecting the whole field, such as publication bias (when the decision to publish a study depends on the result obtained) and the “publish or perish” culture that is prevalent in academia. More recently, another factor has been associated with poor replicability: implausible research hypotheses.

“If the a priori implausibility of the research hypothesis is indicative of replication success, then replication outcomes can be reliably predicted from a brief description of the hypothesis at hand,” write Suzanne Hoogeveen, Alexandra Sarafoglou, and APS Fellow Eric-Jan Wagenmakers (University of Amsterdam). Previous studies showed that individuals with a PhD in the social sciences can predict replication findings with above-chance accuracy. The authors set out to learn whether laypeople (those without a PhD in psychology or a professional background in the social sciences) could do so as well.

To address this question, Hoogeveen and colleagues recruited 257 participants through the online platform Amazon Mechanical Turk, social media platforms such as Facebook, and their university’s pool of online participants (first-year psychology students). The researchers showed participants descriptions of 27 studies that had been included in two large-scale collaborative replication projects: the Social Sciences Replication Project (Camerer et al., 2018) and the Many Labs 2 project (Klein et al., 2018). Of the 27 studies, 14 had been successfully replicated and 13 had not. Each description included the study’s hypothesis, how it was tested, and the key finding. In a description-plus-evidence condition, each description also included the Bayes factor for the original result, which quantifies how strongly the data support the hypothesis relative to the null hypothesis, along with a verbal interpretation of it (e.g., “moderate evidence”). After reading each description, participants indicated whether they believed the study would be replicated successfully.
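To make the verbal interpretation of a Bayes factor concrete, here is a minimal Python sketch that maps a Bayes factor (BF10, evidence for the hypothesis over the null) to a label. It assumes the Jeffreys-style cutoffs of 3, 10, 30, and 100 that are common in this literature; the helper name `label_bayes_factor` is hypothetical, and the exact wording shown to participants in the study may have differed.

```python
def label_bayes_factor(bf10: float) -> str:
    """Return a verbal label for a Bayes factor BF10 (hypothetical helper).

    Assumes Jeffreys-style cutoffs of 3, 10, 30, and 100; the labels used
    in the study itself may have been worded differently.
    """
    if bf10 <= 0:
        raise ValueError("a Bayes factor must be positive")
    # BF10 >= 1 favors the hypothesis (H1); BF10 < 1 favors the null (H0).
    # Invert values below 1 so the same cutoffs describe evidence for H0.
    favored = "H1" if bf10 >= 1 else "H0"
    strength = bf10 if bf10 >= 1 else 1 / bf10
    cutoffs = [(3, "anecdotal"), (10, "moderate"), (30, "strong"), (100, "very strong")]
    for upper, label in cutoffs:
        if strength < upper:
            return f"{label} evidence for {favored}"
    return f"extreme evidence for {favored}"


print(label_bayes_factor(5.0))   # moderate evidence for H1
print(label_bayes_factor(0.05))  # strong evidence for H0
```

Inverting Bayes factors below 1 keeps a single set of cutoffs, so a BF10 of 0.05 reads as the same strength of evidence for the null as a BF10 of 20 does for the hypothesis.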

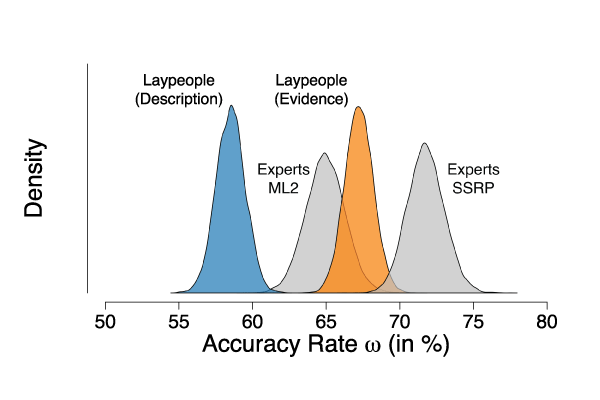

Participants were accurate 59% of the time when predicting replication on the basis of the description alone. In the description-plus-evidence condition, their predictive accuracy went up to 67%.

These findings suggest that “the intuitive plausibility of scientific effects may be indicative of their replicability,” write Hoogeveen and colleagues. However, “laypeople’s predictions should not be equated with the truth.” A signal-detection analysis suggested that one reason the predictions were not more accurate was that participants tended to be optimistic, leaning toward predicting that studies would replicate. The same analysis showed that participants’ above-chance accuracy was not due to this response bias but reflected a genuine ability to discriminate between studies that would and would not replicate.
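The article does not describe how the signal-detection analysis was carried out. As a rough illustration, the standard equal-variance model splits yes/no accuracy into two numbers: sensitivity d′ (how well “will replicate” predictions track actual replication) and criterion c (an overall lean toward one answer, where a negative value means leaning toward “will replicate,” i.e., optimism). The Python sketch below assumes a replicated study counts as the “signal”; the function name, the log-linear correction, and the example counts are illustrative assumptions, not values from Hoogeveen and colleagues.

```python
from statistics import NormalDist


def sdt_measures(hits, misses, false_alarms, correct_rejections):
    """Return (d_prime, criterion_c) from a 2x2 table of yes/no predictions.

    "Signal" = the study replicated; a "yes" response = predicting replication.
    A log-linear correction (add 0.5 to each cell) keeps the rates away from
    0 and 1, where the inverse normal CDF is undefined.
    """
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    z = NormalDist().inv_cdf  # inverse of the standard normal CDF
    # Sensitivity: greater than 0 means predictions beat chance.
    d_prime = z(hit_rate) - z(fa_rate)
    # Bias: less than 0 means a lean toward predicting replication.
    criterion = -0.5 * (z(hit_rate) + z(fa_rate))
    return d_prime, criterion


# Hypothetical participant judging 14 replicated and 13 nonreplicated studies.
d, c = sdt_measures(hits=11, misses=3, false_alarms=8, correct_rejections=5)
print(f"d' = {d:.2f}, c = {c:.2f}")
```

In this made-up example, d′ is positive (the participant discriminates above chance) while c is negative (the participant says “will replicate” too readily). That is the kind of pattern the authors describe: a bias that lowers accuracy without accounting for the discrimination itself.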

Taken together, the “results provide empirical support for the suggestion that intuitive (i.e., unsurprising) effects are more replicable than highly surprising ones, as replicable studies were in fact deemed more replicable than nonreplicable studies by a naive group of laypeople,” add the authors.

Hoogeveen and colleagues suggest that laypeople’s predictions could contribute to replication research—for example, by helping researchers to identify which observed effects are the least likely to replicate and should be further tested. They conclude that “the scientific culture of striving for newsworthy, extreme, and sexy findings is indeed problematic, as counterintuitive findings are the least likely to be replicated successfully.”

References

Camerer, C. F., Dreber, A., Holzmeister, F., Ho, T. H., Huber, J., Johannesson, M., Kirchler, M., Nave, G., Nosek, B. A., Pfeiffer, T., Altmejd, A., Buttrick, N., Chan, T., Chen, Y., Forsell, E., Gampa, A., Heikensten, E., Hummer, L., Imai, T., . . . Wu, H. (2018). Evaluating the replicability of social science experiments in Nature and Science between 2010 and 2015. Nature Human Behaviour, 2(9), 637–644. https://doi.org/10.1038/s41562-018-0399-z

Hoogeveen, S., Sarafoglou, A., & Wagenmakers, E.-J. (2020). Laypeople can predict which social-science studies will be replicated successfully. Advances in Methods and Practices in Psychological Science. Advance online publication. https://doi.org/10.1177/2515245920919667

Klein, R. A., Vianello, M., Hasselman, F., Adams, B. G., Adams, R. B., Jr., Alper, S., Aveyard, M., Axt, J. R., Babalola, M. T., Bahník, Š., Batra, R., Berkics, M., Bernstein, M. J., Berry, D. R., Bialobrzeska, O., Binan, E. D., Bocian, K., Brandt, M. J., Busching, R., . . . Nosek, B. A. (2018). Many Labs 2: Investigating variation in replicability across samples and settings. Advances in Methods and Practices in Psychological Science, 1(4), 443–490. https://doi.org/10.1177/2515245918810225