Psychological Science Explains Uproar over Prostate-Cancer Screenings

WASHINGTON— The uproar that began last year, when the U.S. Preventive Services Task Force stated that doctors should no longer offer regular prostate-cancer tests to healthy men, continued this week when the task force released its final report. Overall, the task force stood by its position: the PSA test, a blood test commonly used to screen for prostate cancer, causes more harm than good because it leads men to receive unnecessary, and sometimes even dangerous, treatments.

But many people simply don’t believe that the test is ineffective. Even faced with overwhelming evidence, such as a ten-year study of around 250,000 men that showed the test didn’t save lives, many activists and medical professionals are clamoring for men to continue receiving their annual PSA test. Why the disconnect?

In an article published in Psychological Science, a publication of the Association for Psychological Science, researchers Hal R. Arkes, of Ohio State University, and Wolfgang Gaissmaier, of the Max Planck Institute for Human Development in Berlin, Germany, analyzed laypeople's reactions to the report and examined why people are so reluctant to give up the PSA test.

“Many folks who had a PSA test and think that it saved their life are infuriated that the Task Force seems to be so negative about the test,” said Arkes.

The researchers suggest several factors that may have contributed to the public's condemnation of the report. Many studies have shown that anecdotes have power over a person's perceptions of medical treatments. For example, a person can be shown statistics indicating that Treatment A works less often than Treatment B, but if they then read anecdotes (such as comments on a website) from other patients who had success with Treatment A, they will be more likely to pick Treatment A. The source of the anecdotes matters too. If a friend, a close relative, or another trusted person was treated successfully, a person is more likely to recommend that treatment to others, even when evidence shows it works for only a minority of patients.

Arkes and Gaissmaier also propose that the public may have reacted so fiercely against the task force's recommendations because many people could not properly evaluate the data in the report. In particular, confusion over the role of control groups (the unscreened men whose outcomes are compared with those of screened men) may have led members of the general public to weigh the data differently than medical professionals did.

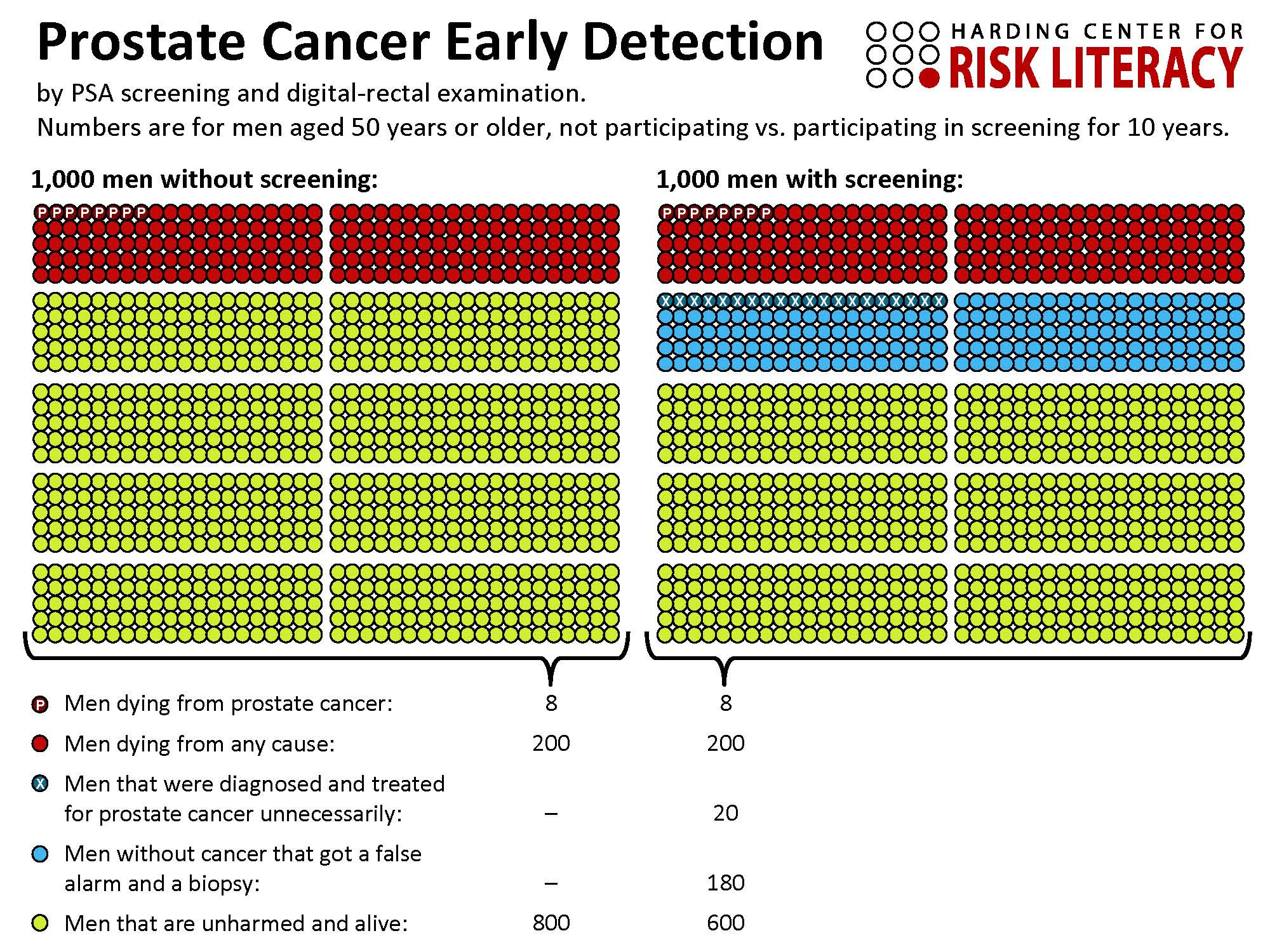

Icon array illustrating the benefits (or lack thereof) and harms of prostate-specific antigen (PSA) screening for men age 50 and older. The underlying epidemiological data are taken from Djulbegovic et al. (2010). Note that the numbers are not meant to be the final verdict on PSA screening, but rather serve to illustrate the order of magnitude of the effects. Copyright 2012 by the Harding Center for Risk Literacy.

“How to change this is the million-dollar question,” said Arkes. “Pictorial displays are far easier to comprehend than statistics. The two figures in our article depict the situation more clearly than text and numbers can do. I think data displayed in this manner can help change people’s view of the PSA test because we compare the relative outcomes of being tested and not being tested. Without that comparison, it is tough for the public to appreciate the relative pluses and minuses of the PSA test versus not having the PSA test.” An example of this kind of display (Figure 3 from the study) is shown above.

Men will be able to continue to request the PSA test, and it will be covered by health insurance for the foreseeable future. But psychological science suggests that unless people are convinced to choose statistics over anecdotes, confusion surrounding the test’s effectiveness will linger.

***

Authors’ notes about the figure:

1. According to the most favorable evidence on PSA screening, the number of men dying from prostate cancer could be reduced from 8 to 7 out of 1,000; according to the less favorable evidence, there is no such reduction. In any case, no trial showed any reduction in overall mortality as a result of screening.

2. Credit should also go to Lisa Schwartz and Steven Woloshin at Dartmouth Medical School for the conceptual development and evaluation of drug facts boxes and for providing the idea of what a facts box could look like in the case of PSA screening. While the Harding Center developed this particular facts box, it stands on the shoulders of giants.

3. Credit also goes to Angela Fagerlin and others for showing the debiasing effect of icon arrays.
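Worked through as simple arithmetic, the most favorable figures in note 1 show how much the framing of the same result can matter. The labels below (absolute risk reduction, relative risk reduction, and number needed to screen) are standard epidemiological terms added here for illustration; they do not appear in the authors' notes. Under the less favorable evidence there is no reduction, so none of these quantities indicates a benefit.

$$
\begin{aligned}
\text{absolute risk reduction} &= \frac{8}{1000} - \frac{7}{1000} = \frac{1}{1000} = 0.1\% \\[4pt]
\text{relative risk reduction} &= \frac{8 - 7}{8} = 12.5\% \\[4pt]
\text{number needed to screen} &= \frac{1}{1/1000} = 1000
\end{aligned}
$$

The same one-death difference can thus be reported as a 12.5% reduction or as one fewer prostate-cancer death per 1,000 men screened, which is the kind of side-by-side comparison an icon array makes visible.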
