Presidential Column

Bayes for Beginners: Probability and Likelihood

Some years ago, a postdoctoral fellow in my lab tried to publish a series of experiments with results that — to his surprise — supported a theoretically important but extremely counterintuitive null hypothesis. He got strong pushback from the reviewers. They said that all he had were insignificant results that could not be used to support his null hypothesis. I knew that Bayesian methods could provide support for null hypotheses, so I began to look into them. I ended up teaching a Bayesian-oriented graduate course in statistics and now use Bayesian methods in analyzing my own data.

When I look back on the formulation of the statistical inference problem I was taught and used for many years, I am astonished that I saw no problem with it: To test our own hypothesis, we test a different hypothesis — the null hypothesis. If it fails, we conclude that our hypothesis is correct — without testing it against the data and without formulating it with the same exactitude with which we formulated the hypothesis we did test (i.e., the null). Moreover, we understand a priori that the null hypothesis can never be accepted; the best it can do is not be rejected. (“You cannot prove the null.”) The realization of these absurdities made me a Bayesian.

There is a sense these days that Bayesian data analysis is a coming thing, so colleagues often consult me about it. In these consultations, I am struck by how much misunderstanding there is about basics. In searching for something to write about that would be of general interest, I settled on presenting the basics of Bayesian data analysis in what I hope is an accessible form.

Distinguishing Likelihood From Probability

The distinction between probability and likelihood is fundamentally important: Probability attaches to possible results; likelihood attaches to hypotheses. Explaining this distinction is the purpose of this first column.

Possible results are mutually exclusive and exhaustive. Suppose we ask a subject to predict the outcome of each of 10 tosses of a coin. There are only 11 possible results (0 to 10 correct predictions). The actual result will always be one and only one of the possible results. Thus, the probabilities that attach to the possible results must sum to 1.

Hypotheses, unlike results, are neither mutually exclusive nor exhaustive. Suppose that the first subject we test predicts 7 of the 10 outcomes correctly. I might hypothesize that the subject just guessed, and you might hypothesize that the subject may be somewhat clairvoyant, by which you mean that the subject may be expected to correctly predict the results at slightly greater than chance rates over the long run. These are different hypotheses, but they are not mutually exclusive, because you hedged when you said “may be.” You thereby allowed your hypothesis to include mine. In technical terminology, my hypothesis is nested within yours. Someone else might hypothesize that the subject is strongly clairvoyant and that the observed result underestimates the probability that her next prediction will be correct. Another person could hypothesize something else altogether. There is no limit to the hypotheses one might entertain.

The set of hypotheses to which we attach likelihoods is limited by our capacity to dream them up. In practice, we can rarely be confident that we have imagined all the possible hypotheses. Our concern is to estimate the extent to which the experimental results affect the relative likelihood of the hypotheses we and others currently entertain. Because we generally do not entertain the full set of alternative hypotheses and because some are nested within others, the likelihoods that we attach to our hypotheses do not have any meaning in and of themselves; only the relative likelihoods — that is, the ratios of two likelihoods — have meaning.

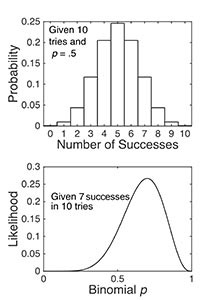

Figure 1. The binomial probability distribution function, given 10 tries at p = .5 (top panel), and the binomial likelihood function, given 7 successes in 10 tries (bottom panel). Both panels were computed using the binopdf function. In the upper panel, I varied the possible results; in the lower, I varied the values of the p parameter. The probability distribution function is discrete because there are only 11 possible experimental results (hence, a bar plot). By contrast, the likelihood function is continuous because the probability parameter p can take on any of the infinite values between 0 and 1. The probabilities in the top plot sum to 1, whereas the integral of the continuous likelihood function in the bottom panel is much less than 1; that is, the likelihoods do not sum to 1.
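The contrast drawn in the caption can be checked numerically. The following is a minimal sketch in Python, not the code used to draw Figure 1. It assumes a hand-written `binopdf` helper that takes its arguments in the order described in this column (successes, tries, probability of success), and it approximates the integral with a simple midpoint rule.

```python
from math import comb

def binopdf(k, n, p):
    """Hypothetical stand-in: probability of k successes in n tries at success rate p."""
    return comb(n, k) * p**k * (1 - p)**(n - k)

# Top panel: n = 10 and p = .5 are fixed; the 11 possible results vary.
probabilities = [binopdf(k, 10, 0.5) for k in range(11)]
total_probability = sum(probabilities)

# Bottom panel: the result (7 successes in 10 tries) is fixed; p varies over (0, 1).
# Midpoint rule: evaluate the likelihood at the center of each narrow strip.
steps = 10_000
width = 1 / steps
likelihood_area = sum(binopdf(7, 10, (i + 0.5) * width) * width for i in range(steps))

print(f"sum of the probabilities:       {total_probability:.4f}")
print(f"area under the likelihood curve: {likelihood_area:.4f}")
```

Under these assumptions the probabilities sum to 1, while the area under the likelihood function comes out near 1/11 (about .09), which is the exact value of this integral for 10 tries.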

Using the Same Function ‘Forwards’ and ‘Backwards’

The difference between probability and likelihood becomes clear when one uses the probability distribution function in general-purpose programming languages. In the present case, the function we want is the binomial distribution function. It is called BINOM.DIST in the most common spreadsheet software and binopdf in the language I use. It has three input arguments: the number of successes, the number of tries, and the probability of a success.

When one uses it to compute probabilities, one assumes that the latter two arguments (number of tries and the probability of success) are given. They are the parameters of the distribution. One varies the first argument (the different possible numbers of successes) in order to find the probabilities that attach to those different possible results (top panel of Figure 1). Regardless of the given parameter values, the probabilities always sum to 1.

By contrast, in computing a likelihood function, one is given the number of successes (7 in our example) and the number of tries (10). In other words, the given results are now treated as parameters of the function one is using. Instead of varying the possible results, one varies the probability of success (the third argument, not the first argument) in order to get the binomial likelihood function (bottom panel of Figure 1). One is running the function backwards, so to speak, which is why likelihood is sometimes called reverse probability.
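The two directions can be sketched with one function called two ways. This is an illustrative Python example under stated assumptions: `binopdf` here is a hand-written stand-in with the three arguments described above, and the grid of 101 candidate values for p is an arbitrary choice made for the illustration.

```python
from math import comb

def binopdf(k, n, p):
    """Hypothetical stand-in: probability of k successes in n tries at success rate p."""
    return comb(n, k) * p**k * (1 - p)**(n - k)

# Forwards: the parameters (n, p) are given and the first argument varies.
# The 11 probabilities sum to 1 whatever value p takes.
forward_sums = {p: sum(binopdf(k, 10, p) for k in range(11)) for p in (0.1, 0.5, 0.7)}

# Backwards: the result (7 successes in 10 tries) is given and the third argument varies.
p_grid = [i / 100 for i in range(101)]
likelihood = {p: binopdf(7, 10, p) for p in p_grid}
most_likely_p = max(likelihood, key=likelihood.get)

for p, total in forward_sums.items():
    print(f"p = {p}: probabilities sum to {total:.4f}")
print(f"likelihood peaks at p = {most_likely_p}")
```

The same function body serves both purposes; only the argument that is allowed to vary changes.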

The information that the binomial likelihood function conveys is extremely intuitive. It says that given that we have observed 7 successes in 10 tries, the probability parameter of the binomial distribution from which we are drawing (the distribution of successful predictions from this subject) is very unlikely to be 0.1; it is much more likely to be 0.7, but a value of 0.5 is by no means unlikely. The ratio of the likelihood at p = .7, which is .27, to the likelihood at p = .5, which is .12, is only 2.28. In other words, given these experimental results (7 successes in 10 tries), the hypothesis that the subject’s long-term success rate is 0.7 is only a little more than twice as likely as the hypothesis that the subject’s long-term success rate is 0.5.
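The figures quoted above can be reproduced in a few lines. This is a minimal sketch in Python, again assuming a hand-written `binopdf` helper rather than any particular library function.

```python
from math import comb

def binopdf(k, n, p):
    """Hypothetical stand-in: probability of k successes in n tries at success rate p."""
    return comb(n, k) * p**k * (1 - p)**(n - k)

# Likelihoods of three candidate values of p, given 7 successes in 10 tries.
like_07 = binopdf(7, 10, 0.7)
like_05 = binopdf(7, 10, 0.5)
like_01 = binopdf(7, 10, 0.1)

# Only the ratio of two likelihoods carries meaning.
ratio = like_07 / like_05

print(f"likelihood at p = .7: {like_07:.2f}")
print(f"likelihood at p = .5: {like_05:.2f}")
print(f"likelihood at p = .1: {like_01:.6f}")
print(f"ratio (.7 versus .5): {ratio:.2f}")
```

The ratio of 2.28 is computed from the unrounded likelihoods; dividing the rounded values .27 and .12 gives 2.25.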

In summary, the likelihood function is a Bayesian basic. To understand likelihood, you must be clear about the differences between probability and likelihood:

Probabilities attach to results; likelihoods attach to hypotheses. In data analysis, the “hypotheses” are most often a possible value or a range of possible values for the mean of a distribution, as in our example.

The results to which probabilities attach are mutually exclusive and exhaustive; the hypotheses to which likelihoods attach are often neither; the range in one hypothesis may include the point in another, as in our example.

To decide which of two hypotheses is more likely given an experimental result, we consider the ratios of their likelihoods. This ratio, the relative likelihood ratio, is called the “Bayes Factor.”

Comments

I think that this is an excellent introduction. Could we have more, please?

Dave Howell

This is wonderfully clear and illuminating. I look forward to reading more!

I am afraid this column makes a couple of confusing claims.

One claim is that the results to which probabilities attach are mutually exclusive whereas the hypotheses to which likelihoods attach are often neither. I see no basis for making this distinction. It is very common that one considers probabilities of results that are not mutually exclusive (e.g., the result of getting at least five coin tosses correct overlaps with the result of getting at least six coin tosses correct).

The other confusing claim is more serious. It is claimed that “given that we have observed 7 successes in 10 tries, the probability parameter of the binomial distribution from which we are drawing (the distribution of successful predictions from this subject) is very unlikely to be 0.1”. This kind of claim is known as the “fallacy of the transposed conditional”. What the likelihood function tells us is that we are unlikely to obtain the result of 7 successes in 10 tries when the underlying parameter is 0.1. It does *not* tell us that it is very unlikely for the underlying parameter to be 0.1 given the result of 7 successes in 10 tries. (Indeed, if prior to obtaining this result we somehow fixed the parameter to have the value 0.1, we can be confident that the parameter indeed has the value 0.1, in which case this is not at all unlikely!) The distinction between this so-called “posterior probability” and the likelihood function lies at the core of Bayesian statistics.

This is excellent. Can we read more?

As regards the first of Kimmo Erikson’s objections: a fundamental property of a probability distribution is that it sums or integrates to 1. This is a direct consequence of the fact that the elements of the support for a probability distribution (the set of possibilities for which it defines probabilities) are mutually exclusive and exhaustive. In my effort to be accessible, I failed to make it sufficiently clear that I was referring to the probabilities in a probability distribution, not to the probabilities obtained by integrating over various portions of such a distribution, which are the probabilities alluded to in the proffered counterexample.

As regards the second objection: I stick by what I said; the likelihood function gives the relative likelihoods of different values for the parameter(s) of the distribution from which the data are assumed to have been drawn, given those data. Given 7 out of 10 successes, the likelihood function tells us that p = 0.1 is relatively very unlikely. That prior knowledge of its actual value would change the posterior likelihood is, of course, not in question. If we have fixed the value of p at 0.1, then the prior odds (NB, not the prior distribution) in favor of this value are infinite, in which case, of course, the data are irrelevant. No data can produce a Bayes Factor that will countervail infinite prior odds.

Thank you for your help. This is a useful and easy-to-understand article. One question: what would be the prior probability? Does that refer to the likelihood?

Thank you for putting up this article which has explained it very clearly.

This one gave me a much better understanding of these two terms than any of the other sources that I read.

I request you to write more about such topics.

For the probability, why does the number of tries need to be known? In looking up the probability, on any distribution that I typically use, of X number of events (successes) occurring for any given mean is all that is required.

I attended an APS workshop on Bayesian Statistics using the JASP software. This is the most exciting advance in statistics in my lifetime. The theory is a little counter-intuitive if you have been null hypothesis testing for decades. However, the workshop and the youtube videos on using Bayes on JASP make it easy.

Very clear tutorial. I hope to find more.

I am trying to learn Bayesian statistics, and the definition given for likelihood differs from how I have seen the term used.

The basic equation can be written: P(X|Y) = P(Y|X)*P(X)/P(Y), where X is the parameters and Y is the data. The equation is described as: Posterior = Likelihood * Prior / Evidence.

The likelihood term, P(Y|X) is the probability of getting a result for a given value of the parameters. It is what you label probability. The posterior and prior terms are what you describe as likelihoods.

RE: “The likelihood term, P(Y|X) is the probability of getting a result for a given value of the parameters. It is what you label probability.”

A problem may exist in referring to “P(Y|X)” as “The likelihood term”. “P(Y|X)” is generally referred to as a conditional probability, not a “likelihood”. (Note use of the letter “P” rather than “L”.) P(Y|X) is usually described as the probability of the event “Y” occurring, given that the event “X” has actually been observed.

The likelihood is not a probability distribution; unless normalized, it might not sum to 1. Do not mix likelihoods and conditional probabilities.

This description of likelihood as a relative measure between two hypotheses has helped me to (maybe?) get an initial grasp – after having flailed through a half dozen other attempts on wiki, statsexchange, mathoverflow, quora, etc. as well as a few university texts.

Wow, this article is crystal clear about the difference between likelihood and probability. Very helpful!

Thank you for posting this! Perfect for someone who doesn’t have a background in stats.

I’m trying to use P(H|E) = P(E|H)P(H)/(P(E|H)P(H) + P(E|~H)P(~H)). I’ve been calling P(E|H) the likelihood of E given H. I’m told that I’m not using the term (likelihood) correctly. What should I be calling it?

This article helped to crystallize a nascent idea that had been forming in my mind, but I couldn’t yet articulate. Probability is about a finite set of possible outcomes, given a probability. Likelihood is about an infinite set of possible probabilities, given an outcome. Thanks much for helping me get this.

In ordinary English, there is no difference. Merriam-Webster defines each by the other. Whether a difference exists is an empirical question:

“To decide which words to include in the dictionary and to determine what they mean, Merriam-Webster editors study the language as it’s used. They carefully monitor which words people use most often and how they use them.” https://www.merriam-webster.com/help/faq-words-into-dictionary

Do English speakers use the terms interchangeably? Do they seem to recognize a distinction? And so on. Given that Merriam-Webster defined each by the other, I’m guessing Merriam-Webster’s monitoring revealed that people overwhelmingly use the two words interchangeably.

You (or your discipline) defined the words as distinct, or at least in a manner that, by a series of inferences, leads to the conclusion they are distinct:

“Probability attaches to possible results; likelihood attaches to hypotheses.” AND “Possible results are mutually exclusive and exhaustive.” AND “Hypotheses, unlike results, are neither mutually exclusive nor exhaustive” THEREFORE: Likelihood and probability are distinct.

You’re right, the distinction is fundamental, but not in any interesting way (it is basically an analytic/vacuous truth). The distinction is fundamental in the sense that it is a hidden assumption within your first premise. In other words, your argument is circular.

This is not necessarily a bad thing. I understand ordinary language words can help people conceptualize scientific and mathematical concepts. But science/mathematics and ordinary languages are different languages. “Likelihood” as used in your essay is a different word from the ordinary English version.

While intended to help conceptualize scientific/mathematical concepts, science’s adoption of ordinary English terms often leads to confusion, and ultimately pointless debates where people talk past each other. It’s a trap we’ve all fallen into before.

Physics has learned this lesson, hence “gluon”, “quark”, etc.

Thanks to Karey Lakin for pointing out just how maddeningly confusing all of this is to natural language speakers.

Apparently, we need some way to distinguish between the probability of a given result (say, that a judge will rule in favor of the plaintiff on a motion to dismiss in a lawsuit) and the probability that a given hypothesis about how the judge will rule is correct (say, the probability that the judge will rule for the plaintiff because the plaintiff has bribed the judge). If that is not the distinction you are making, then I have not understood the difference correctly. For speakers of standard English, that would be a better way to put it because as Karey points out “probability” and “likelihood” mean exactly the same thing. To use them with distinct and different meanings requires everyone to unlearn and relearn, causing the brain to fight against the new meaning every time the word is used, setting up a kind of cognitive dissonance.

I do not think that testing the null is by any means an “absurdity,” and I also disagree with the phrase “You cannot prove the null.” This is why people nourish a binary reasoning environment. After all, p-values are probabilities given a model, and guess what: if you plot a p-value function, you will see which parameter values are more supported by the data.
